A practical approach to Kalman filter and how to implement it

I have for a long time been interested in Kalman filters and how they work. I also used a Kalman filter for my Balancing robot, but I never explained how it was actually implemented. In fact I had never taken the time to sit down with a pen and a piece of paper and try to do the math by myself, so I did not really know how it was implemented.

It turned out to be a good thing, as I actually discovered a mistake in the original code, but I will get back to that later.

I actually wrote about the Kalman filter as my master assignment in high school back in December 2011. But I only used the Kalman filter to calculate the true voltage of a DC signal modulated by known Gaussian white noise. My assignment can be found in the following zip file: http://www.tkjelectronics.dk/uploads/Kalman_SRP.zip. It is in Danish, but you can probably use Google Translate to translate some of it. If you have any specific questions regarding the assignment, then ask in the comments below.

Okay, but back to the subject. As I said, I had never taken the time to sit down and do the math regarding the Kalman filter based on an accelerometer and a gyroscope. It was not as hard as I expected, but I must confess that I still have not studied the deeper theory behind it and why it actually works. Like most people out there, I am more interested in implementing the filter than in the deeper theory behind it and why the equations work.

Before we begin you must have some basic knowledge about matrices like multiplication of matrices and transposing of matrices. If not then please take a look at the following websites:

- http://en.wikipedia.org/wiki/Matrix_multiplication#Matrix_product_.28two_matrices.29

- http://www.mathwarehouse.com/algebra/matrix/multiply-matrix.php

- http://en.wikipedia.org/wiki/Transpose

- http://en.wikipedia.org/wiki/Covariance_matrix

For those of you who do not know what a Kalman filter is, it is an algorithm which uses a series of measurements observed over time, in this context from an accelerometer and a gyroscope. These measurements will contain noise that will contribute to the error of the measurement. The Kalman filter then tries to estimate the state of the system based on the current and previous states, and this estimate tends to be more precise than the measurements alone.

In this context the problem is that the accelerometer is in general very noisy when it is used to measure the gravitational acceleration, since the robot is moving back and forth. The problem with the gyro is that it drifts over time – just like a spinning wheel-gyro will start to fall down when it is losing speed.

In short you can say that you can only trust the gyroscope on a short term while you can only trust the accelerometer on a long term.

There is actually a very easy way to deal with this by using a complementary filter, which basically just consists of a digital low-pass filter on the accelerometer and a digital high-pass filter on the gyroscope readings. It is not as accurate as the Kalman filter, but other people have successfully built balancing robots using a fine-tuned complementary filter.
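To make the idea concrete, here is a minimal sketch of one common way to write a complementary filter. It is not taken from my library: the function name `complementaryUpdate` and the weight `alpha = 0.98` are assumptions chosen for illustration, and the weight would have to be tuned for a real robot.

```cpp
#include <cassert>
#include <cmath>

// Hypothetical helper for illustration only (not part of the Kalman library).
// angle    : previous filtered angle in degrees
// gyroRate : gyroscope rate in degrees per second
// accAngle : angle calculated from the accelerometer in degrees
// dt       : time since the last update in seconds
// alpha    : weight between 0 and 1; close to 1 means the integrated gyro is
//            trusted in the short term, while (1 - alpha) slowly pulls the
//            result towards the accelerometer angle in the long term
double complementaryUpdate(double angle, double gyroRate, double accAngle,
                           double dt, double alpha = 0.98) {
    assert(dt > 0.0 && alpha >= 0.0 && alpha <= 1.0);
    return alpha * (angle + gyroRate * dt) + (1.0 - alpha) * accAngle;
}
```

The gyro term acts as the high-pass part and the accelerometer term as the low-pass part, which matches the short term versus long term trust described above.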

More information about gyroscopes, accelerometers and complementary filters can be found in this pdf. A comparison between a complementary filter and a Kalman filter can be found in the following blog post.

The Kalman filter operates by producing a statistically optimal estimate of the system state based upon the measurement(s). To do this it needs to know the noise of the input to the filter, called the measurement noise, but also the noise of the system itself, called the process noise. For this to work the noise has to be Gaussian distributed and have a mean of zero; luckily for us most random noise has this characteristic.

For more information about the theory behind the filter take a look at the following pages:

- http://en.wikipedia.org/wiki/Kalman_filter

- http://www.cs.unc.edu/~welch/media/pdf/kalman_intro.pdf

- http://academic.csuohio.edu/simond/courses/eec644/kalman.pdf

The system state

The next part of this article might seem very confusing for some, but I promise you that if you grab a pen and a piece of paper and try to follow along, it is not that hard if you are reasonably good at math.

If you, like me, do not have a calculator or computer program that can work with matrices, then I recommend the free online calculator Wolfram Alpha. I used it for all the calculations in this article.

I will use the same notation as the Wikipedia article, but I would like to note that when the matrices are constants and do not depend on the current time you do not have to write the k after them. So for instance $F_k$ can be simplified to $F$.

Also I would like to write a small explanation of the other aspects of the notations.

First I will make a note about what is called the previous state:

$$\hat{x}_{k-1|k-1}$$

Which is the previous estimated state based on the previous state and the estimates of the states before it.

The next is the a priori state:

$$\hat{x}_{k|k-1}$$

A priori means the estimate of the state matrix at the current time k based on the previous state of the system and the estimates of the states before it.

The last one is called the a posteriori state:

$$\hat{x}_{k|k}$$

It is the estimate of the state at time k given observations up to and including time k.

The problem is that the system state itself is hidden and can only be observed through the observation $z_k$. This is also called a Hidden Markov model.

This means that the state will be based upon the state at time k and all the previous states. That also means that you can not trust the estimate of the state before the Kalman filter has stabilized – take a look at the graph at the front page of my assignment.

The hat over the $\hat{x}$ means that it is the estimate of the state, unlike a plain $x$, which means the true state – the one we are trying to estimate.

So the notation for the state at time k is:

$$x_k$$

The state of the system at time k is given by:

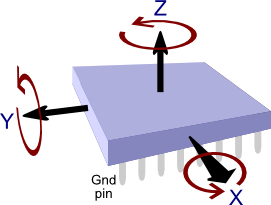

Where $x_k$ is the state matrix which is given by:

$$x_k = \begin{bmatrix} \theta \\ \dot{\theta}_b \end{bmatrix}_k$$

As you can see the output of the filter will be the angle $\theta$ but also the bias $\dot{\theta}_b$ based upon the measurements from the accelerometer and gyroscope. The bias is the amount the gyro has drifted. This means that one can get the true rate by subtracting the bias from the gyro measurement.

The next is the $F$ matrix, which is the state transition model which is applied to the previous state $x_{k-1}$.

In this case $F$ is defined as:

$$F = \begin{bmatrix} 1 & -\Delta t \\ 0 & 1 \end{bmatrix}$$

I know that the $-\Delta t$ might seem confusing, but it will make sense later (take a look at my comment).

The next is the control input $u_k$, in this case it is the gyroscope measurement in degrees per second (°/s) at time k, this is also called the rate $\dot{\theta}$. We will actually rewrite the state equation as:

$$x_k = F x_{k-1} + B \dot{\theta}_k + w_k$$

The next thing is the $B$ matrix, which is called the control-input model and is defined as:

$$B = \begin{bmatrix} \Delta t \\ 0 \end{bmatrix}$$

This makes perfect sense as you will get the angle $\theta$ when you multiply the rate $\dot{\theta}$ by the delta time $\Delta t$, and since we can not calculate the bias directly based on the rate we will set the bottom of the matrix to 0.

$w_k$ is process noise which is Gaussian distributed with a zero mean and with covariance $Q$ to the time k:

$$w_k \sim N(0, Q_k)$$

$Q_k$ is the process noise covariance matrix and in this case the covariance matrix of the state estimate of the accelerometer and bias. In this case we will consider the estimate of the bias and the accelerometer to be independent, so it’s actually just equal to the variance of the estimate of the accelerometer and bias.

The final matrix is defined as so:

$$Q_k = \begin{bmatrix} Q_\theta & 0 \\ 0 & Q_{\dot{\theta}_b} \end{bmatrix} \Delta t$$

As you can see the $Q_k$ covariance matrix depends on the current time k, so the accelerometer variance $Q_\theta$ and the variance of the bias $Q_{\dot{\theta}_b}$ are multiplied by the delta time $\Delta t$.

This makes sense as the process noise will be larger the longer it has been since the last update of the state. For instance the gyro could have drifted.

You will have to know these constants for the Kalman filter to work.

Note that the larger the value you set, the more noise you assume in the estimation of the state. So for instance if the estimated angle starts to drift you have to increase the value of $Q_{\dot{\theta}_b}$. Otherwise if the estimate tends to be slow you are trusting the estimate of the angle too much and should try to decrease the value of $Q_\theta$ to make it more responsive.

The measurement

Now we will take a look at the observation or measurement $z_k$ of the true state $x_k$. The observation $z_k$ is given by:

$$z_k = H x_k + v_k$$

As you can see the measurement $z_k$ is given by the current state $x_k$ multiplied by the $H$ matrix plus the measurement noise $v_k$.

$H$ is called the observation model and is used to map the true state space into the observed space. The true state can not be observed. Since the measurement is just the measurement from the accelerometer, $H$ is given by:

$$H = \begin{bmatrix} 1 & 0 \end{bmatrix}$$

The noise of the measurement has to be Gaussian distributed as well, with a zero mean and $R$ as the covariance:

$$v_k \sim N(0, R)$$

But as $R$ is not a matrix the measurement noise is just equal to the variance of the measurement, since the covariance of the same variable is equal to the variance. See this page for more information.

Now we can define $R$ as so:

$$R = E\left[v_k v_k^T\right] = \mathrm{var}(v_k)$$

More information about covariance can be found on Wikipedia and in my assignment.

We will assume that the measurement noise is the same and does not depend on the time k:

$$\mathrm{var}(v_k) = \mathrm{var}(v)$$

Note that if you set the measurement noise variance too high the filter will respond really slowly as it is trusting new measurements less, but if it is too small the value might overshoot and be noisy since we trust the accelerometer measurements too much.

So to round up you have to find the process noise variances $Q_\theta$ and $Q_{\dot{\theta}_b}$ and the variance of the measurement noise $\mathrm{var}(v)$. There are multiple ways to find them, but that is outside the scope of this article.

The Kalman filter equations

Okay, now to the equations we will use to estimate the true state of the system at time k. Some clever guys came up with the equations found below to estimate the state of the system.

The equations can be written more compactly, but I prefer to have them stretched out, so it is easier to implement and understand the different steps.

Predict

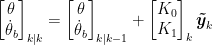

In the first two equations we will try to predict the current state and the error covariance matrix at time k. First the filter will try to estimate the current state based on all the previous states and the gyro measurement:

$$\hat{x}_{k|k-1} = F \hat{x}_{k-1|k-1} + B \dot{\theta}_k$$

That is also why it is called a control input, since we use it as an extra input to estimate the state at the current time k, called the a priori state $\hat{x}_{k|k-1}$.

The next thing is that we will try to estimate the a priori error covariance matrix $P_{k|k-1}$ based on the previous error covariance matrix $P_{k-1|k-1}$, which is defined as:

$$P_{k|k-1} = F P_{k-1|k-1} F^T + Q_k$$

This matrix is used to estimate how much we trust the current values of the estimated state. The smaller it is, the more we trust the current estimated state. The principle of the equation above is actually pretty easy to understand, as it is pretty obvious that the error covariance will have increased since we last updated the estimate of the state. Therefore we multiply the error covariance matrix by the state transition model $F$ and the transpose of that, $F^T$, and add the current process noise $Q_k$ at time k.

The error covariance matrix $P$ in our case is a 2×2 matrix:

$$P = \begin{bmatrix} P_{00} & P_{01} \\ P_{10} & P_{11} \end{bmatrix}$$

Update

The first thing we will do is to compute the difference between the measurement $z_k$ and the a priori state $\hat{x}_{k|k-1}$, this is also called the innovation:

$$\tilde{y}_k = z_k - H \hat{x}_{k|k-1}$$

The observation model $H$ is used to map the a priori state $\hat{x}_{k|k-1}$ into the observed space which is the measurement from the accelerometer, therefore the innovation is not a matrix.

The next thing we will do is calculate what is called the innovation covariance:

$$S_k = H P_{k|k-1} H^T + R$$

What it does is that it tries to predict how much we should trust the measurement based on the a priori error covariance matrix $P_{k|k-1}$ and the measurement noise covariance $R$.

The bigger the value of the measurement noise the larger the value of $S$, this means that we do not trust the incoming measurement that much.

In this case $S$ is not a matrix but just a single number.

The next step is to calculate the Kalman gain. The Kalman gain is used to indicate how much we trust the innovation and is defined as:

$$K_k = P_{k|k-1} H^T S_k^{-1}$$

You can see that if we do not trust the innovation that much the innovation covariance $S$ will be high, and if we trust the estimate of the state then the error covariance matrix $P$ will be small. The Kalman gain will therefore be small, and the opposite if we trust the innovation but do not trust the estimate of the current state.

If you take a deeper look you can see that the transpose of the observation model $H$ is used to map the state of the error covariance matrix $P$ into observed space. We then compare the error covariance matrix by multiplying with the inverse of the innovation covariance $S$.

This makes sense as we will use the observation model $H$ to extract data from the state error covariance and compare that with the current estimate of the innovation covariance.

Note that if you do not know the state at startup you can set the error covariance matrix like so:

$$P = \begin{bmatrix} L & 0 \\ 0 & L \end{bmatrix}$$

Where $L$ represents a large number.

For my balancing robot I know the starting angle and I find the bias of the gyro at startup by calibrating, so I assume that the state will be known at startup, so I initialize the error covariance matrix like so:

$$P = \begin{bmatrix} 0 & 0 \\ 0 & 0 \end{bmatrix}$$

Take a look at my calibration routine for more information.

In this case the Kalman gain is a 2×1 matrix:

$$K = \begin{bmatrix} K_0 \\ K_1 \end{bmatrix}$$

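Now we can update the a posteriori estimate of the current state:

$$\hat{x}_{k|k} = \hat{x}_{k|k-1} + K_k \tilde{y}_k$$

This is done by adding the a priori state $\hat{x}_{k|k-1}$ to the Kalman gain multiplied by the innovation $\tilde{y}_k$.

Remember that the innovation $\tilde{y}_k$ is the difference between the measurement $z_k$ and the estimated a priori state $\hat{x}_{k|k-1}$, so the innovation can be both positive and negative.

A little simplified, the equation can be understood as simply correcting the estimate of the a priori state $\hat{x}_{k|k-1}$, that was calculated using the previous state and the gyro measurement, with the measurement – in this case the accelerometer.

The last thing we will do is update the a posteriori error covariance matrix:

$$P_{k|k} = (I - K_k H) P_{k|k-1}$$

Where $I$ is called the identity matrix and is defined as:

$$I = \begin{bmatrix} 1 & 0 \\ 0 & 1 \end{bmatrix}$$

What the filter is doing is that it is basically self-correcting the error covariance matrix based on how much we corrected the estimate. This makes sense as we corrected the state based on the a priori error covariance matrix $P_{k|k-1}$, but also the innovation covariance $S_k$.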

Implementing the filter

In this section I will use the equations from above to implement the filter in some simple C++ code that can be used for balancing robots, quadcopters and other applications where you need to compute the angle, bias or rate.

In case you want the code next to you, it can be found at github: https://github.com/TKJElectronics/KalmanFilter.

I will simply write the equations at the top of each step and then simplify them. After that I will write how it can be done in C, and finally I will link to the calculations done in Wolfram Alpha at the bottom of each step, as I used them to do the calculation.

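Step 1:

$$\hat{x}_{k|k-1} = F \hat{x}_{k-1|k-1} + B \dot{\theta}_k$$

$$\begin{bmatrix} \theta \\ \dot{\theta}_b \end{bmatrix}_{k|k-1} = \begin{bmatrix} 1 & -\Delta t \\ 0 & 1 \end{bmatrix} \begin{bmatrix} \theta \\ \dot{\theta}_b \end{bmatrix}_{k-1|k-1} + \begin{bmatrix} \Delta t \\ 0 \end{bmatrix} \dot{\theta}_k = \begin{bmatrix} \theta + \Delta t (\dot{\theta} - \dot{\theta}_b) \\ \dot{\theta}_b \end{bmatrix}$$

As you can see the a priori estimate of the angle $\hat{\theta}_{k|k-1}$ is equal to the estimate of the previous state $\hat{\theta}_{k-1|k-1}$ plus the unbiased rate times the delta time $\Delta t$.

Since we can not directly measure the bias the estimate of the a priori bias is just equal to the previous one.

This can be written in C like so:

rate = newRate - bias;

angle += dt * rate;

Note that I calculate the unbiased rate, so it can be used by the user as well.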

Wolfram Alpha links:

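Step 2:

$$P_{k|k-1} = F P_{k-1|k-1} F^T + Q_k$$

$$\begin{bmatrix} P_{00} & P_{01} \\ P_{10} & P_{11} \end{bmatrix}_{k|k-1} = \begin{bmatrix} P_{00} + \Delta t (\Delta t P_{11} - P_{01} - P_{10} + Q_\theta) & P_{01} - \Delta t P_{11} \\ P_{10} - \Delta t P_{11} & P_{11} + Q_{\dot{\theta}_b} \Delta t \end{bmatrix}$$

The equations above can be written in C like so:

P[0][0] += dt * (dt * P[1][1] - P[0][1] - P[1][0] + Q_angle);

P[0][1] -= dt * P[1][1];

P[1][0] -= dt * P[1][1];

P[1][1] += Q_gyroBias * dt;

Note that this is the part of the original code I used that contained an error.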
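If you want to check the simplification of step 2 yourself, one option is to compare it against the full matrix product. The sketch below does that; the names `Mat2`, `mul`, `predictCovarianceMatrixForm` and `predictCovarianceExpanded` are made up for this illustration and are not part of the library.

```cpp
#include <array>
#include <cassert>
#include <cmath>

using Mat2 = std::array<std::array<double, 2>, 2>;

// Plain 2x2 matrix product, only used to check the hand-simplified form.
Mat2 mul(const Mat2& a, const Mat2& b) {
    Mat2 c{};  // value-initialized to all zeros
    for (int i = 0; i < 2; ++i)
        for (int j = 0; j < 2; ++j)
            for (int k = 0; k < 2; ++k)
                c[i][j] += a[i][k] * b[k][j];
    return c;
}

// P = F * P * F^T + Q * dt, written out with full matrices.
Mat2 predictCovarianceMatrixForm(const Mat2& P, double dt,
                                 double qAngle, double qBias) {
    assert(dt > 0.0);
    const Mat2 F  = {{{1.0, -dt}, {0.0, 1.0}}};
    const Mat2 Ft = {{{1.0, 0.0}, {-dt, 1.0}}};
    Mat2 out = mul(mul(F, P), Ft);
    out[0][0] += qAngle * dt;
    out[1][1] += qBias * dt;
    return out;
}

// The same update in the simplified element-wise form used in step 2.
// P is taken by value, so every line still sees the old P[1][1].
Mat2 predictCovarianceExpanded(Mat2 P, double dt, double qAngle, double qBias) {
    P[0][0] += dt * (dt * P[1][1] - P[0][1] - P[1][0] + qAngle);
    P[0][1] -= dt * P[1][1];
    P[1][0] -= dt * P[1][1];
    P[1][1] += qBias * dt;
    return P;
}
```

Both functions should give the same result for any starting matrix; the order of the four lines in the expanded version matters, because P[0][0] has to be updated before P[0][1] and P[1][0] are changed.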

Wolfram Alpha links:

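Step 3:

$$\tilde{y}_k = z_k - H \hat{x}_{k|k-1} = z_k - \begin{bmatrix} 1 & 0 \end{bmatrix} \begin{bmatrix} \theta \\ \dot{\theta}_b \end{bmatrix}_{k|k-1} = z_k - \theta_{k|k-1}$$

The innovation can be calculated in C like so:

y = newAngle - angle;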

Wolfram Alpha links:

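Step 4:

$$S_k = H P_{k|k-1} H^T + R = \begin{bmatrix} 1 & 0 \end{bmatrix} \begin{bmatrix} P_{00} & P_{01} \\ P_{10} & P_{11} \end{bmatrix}_{k|k-1} \begin{bmatrix} 1 \\ 0 \end{bmatrix} + R = P_{00\,k|k-1} + \mathrm{var}(v)$$

Again the C code is pretty simple:

S = P[0][0] + R_measure;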

Wolfram Alpha links:

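Step 5:

$$K_k = P_{k|k-1} H^T S_k^{-1}$$

$$\begin{bmatrix} K_0 \\ K_1 \end{bmatrix}_k = \frac{1}{S_k} \begin{bmatrix} P_{00} \\ P_{10} \end{bmatrix}_{k|k-1}$$

Note that in other cases $S$ can be a matrix and you can not just simply divide $P$ by $S$. Instead you have to calculate the inverse of the matrix. See the following page for more information on how to do so.

The C implementation looks like this:

K[0] = P[0][0] / S;

K[1] = P[1][0] / S;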

Wolfram Alpha links:

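Step 6:

$$\hat{x}_{k|k} = \hat{x}_{k|k-1} + K_k \tilde{y}_k$$

$$\begin{bmatrix} \theta \\ \dot{\theta}_b \end{bmatrix}_{k|k} = \begin{bmatrix} \theta \\ \dot{\theta}_b \end{bmatrix}_{k|k-1} + \begin{bmatrix} K_0 \tilde{y} \\ K_1 \tilde{y} \end{bmatrix}_k$$

Yet again the equation ends up pretty short, and can be written as so in C:

angle += K[0] * y;

bias += K[1] * y;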

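Step 7:

$$P_{k|k} = (I - K_k H) P_{k|k-1}$$

$$\begin{bmatrix} P_{00} & P_{01} \\ P_{10} & P_{11} \end{bmatrix}_{k|k} = \begin{bmatrix} P_{00} & P_{01} \\ P_{10} & P_{11} \end{bmatrix}_{k|k-1} - \begin{bmatrix} K_0 P_{00} & K_0 P_{01} \\ K_1 P_{00} & K_1 P_{01} \end{bmatrix}$$

Remember that we decrease the error covariance matrix again, since the error of the estimate of the state has been decreased.

The C code looks like this:

float P00_temp = P[0][0];

float P01_temp = P[0][1];

P[0][0] -= K[0] * P00_temp;

P[0][1] -= K[0] * P01_temp;

P[1][0] -= K[1] * P00_temp;

P[1][1] -= K[1] * P01_temp;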

Wolfram Alpha links:

Note that I have found that the following variances work well for most IMUs:

float Q_angle = 0.001;

float Q_gyroBias = 0.003;

float R_measure = 0.03;

Remember that it’s very important to set the target angle at startup if you need to use the output at startup. For more information, see the calibration routine for my balancing robot.

In case you missed it, I wrote a library that can be used by any microcontroller that supports floating-point math. The source code can be found at github: https://github.com/TKJElectronics/KalmanFilter.
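To see how the seven steps fit together, here is a compact sketch that puts them into one update function. It follows the steps above but is not a copy of the library: the class name `AngleKalman`, the method names and the use of double instead of float are choices made for this illustration. The three variances are the ones listed above.

```cpp
#include <cassert>
#include <cmath>

// Illustrative sketch only; see the library on github for the real code.
class AngleKalman {
public:
    // newAngle: accelerometer angle in degrees, newRate: gyro rate in
    // degrees per second, dt: time since the last call in seconds.
    double update(double newAngle, double newRate, double dt) {
        assert(dt > 0.0);
        // Step 1: predict the state using the unbiased rate
        const double rate = newRate - bias_;
        angle_ += dt * rate;
        // Step 2: predict the error covariance
        P_[0][0] += dt * (dt * P_[1][1] - P_[0][1] - P_[1][0] + qAngle_);
        P_[0][1] -= dt * P_[1][1];
        P_[1][0] -= dt * P_[1][1];
        P_[1][1] += qBias_ * dt;
        // Step 3: innovation
        const double y = newAngle - angle_;
        // Step 4: innovation covariance
        const double S = P_[0][0] + rMeasure_;
        // Step 5: Kalman gain
        const double K0 = P_[0][0] / S;
        const double K1 = P_[1][0] / S;
        // Step 6: correct the state
        angle_ += K0 * y;
        bias_ += K1 * y;
        // Step 7: correct the error covariance
        const double P00 = P_[0][0];
        const double P01 = P_[0][1];
        P_[0][0] -= K0 * P00;
        P_[0][1] -= K0 * P01;
        P_[1][0] -= K1 * P00;
        P_[1][1] -= K1 * P01;
        return angle_;
    }
    void setAngle(double angle) { angle_ = angle; }  // set the starting angle
    double angle() const { return angle_; }
    double bias() const { return bias_; }

private:
    double qAngle_ = 0.001, qBias_ = 0.003, rMeasure_ = 0.03;
    double angle_ = 0.0, bias_ = 0.0;
    double P_[2][2] = {{0.0, 0.0}, {0.0, 0.0}};  // state assumed known at startup
};
```

A typical loop would call `update()` once per sample with the accelerometer angle, the gyro rate and the measured dt, after calling `setAngle()` with the first accelerometer angle.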

If you prefer a video explanation about the Kalman filter, I recommend the following video series: http://www.youtube.com/watch?v=FkCT_LV9Syk.

Note that you can not use the library if you need to represent something in full 3D orientation, as Euler angles suffer from what is called gimbal lock. You will need to use quaternions to do that, but that is a whole other story. For now take a look at the following page.

This is all for now. I hope that you will find it helpful. If you do, or if you have any questions, feel free to post a comment below – it supports LaTeX syntax as well, if you need to write equations.

If you spot any errors please let me know as well.

Thanks for the great article and for the reference to filter.pdf (my document from ages ago…). This is definitely one of the better explanations I’ve seen about making a Kalman filter for angle estimation based on accelerometer/gyro data.

What do you think about the idea of pre-computing the P, S, and K values? They should converge independent of the states and measurements. In the steady-state, it seems like you would be back to a complementary filter with K[0]’s steady-state value and a simple bias estimator with K[1]’s steady-state. Of course, having the computation done in real-time opens up more possibilities (covariance reset, EKF, etc.).

@Shane

Thank you very much. I must say that your pdf and the project details about your balancing robot were a big inspiration too, when I started to look into building a balancing robot.

I see your point. They should end up being constants after some time, as they don’t depend on any incoming data from either gyroscope or accelerometer.

It might be interesting to run the numbers in Matlab and see what P, S, and K end up with. That might be material for a future blog post.
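A rough way to try this without Matlab is to run only the covariance part of the filter (steps 2, 4, 5 and 7) in a loop, since neither the angle nor the rate appears in those steps. The sketch below does that; the function name `steadyStateGain` is made up for this example, and a fixed dt is an assumption, because the gain only settles to constants if the time step stays the same.

```cpp
#include <array>
#include <cassert>
#include <cmath>

// Iterates the covariance equations and returns the last gain {K[0], K[1]}.
// Illustration only: assumes a fixed dt and P starting at zero.
std::array<double, 2> steadyStateGain(double dt, double qAngle, double qBias,
                                      double rMeasure, int iterations) {
    assert(dt > 0.0 && rMeasure > 0.0 && iterations > 0);
    double P[2][2] = {{0.0, 0.0}, {0.0, 0.0}};
    std::array<double, 2> K = {0.0, 0.0};
    for (int i = 0; i < iterations; ++i) {
        // Step 2: predict the error covariance
        P[0][0] += dt * (dt * P[1][1] - P[0][1] - P[1][0] + qAngle);
        P[0][1] -= dt * P[1][1];
        P[1][0] -= dt * P[1][1];
        P[1][1] += qBias * dt;
        // Step 4 and 5: innovation covariance and Kalman gain
        const double S = P[0][0] + rMeasure;
        K[0] = P[0][0] / S;
        K[1] = P[1][0] / S;
        // Step 7: correct the error covariance
        const double P00 = P[0][0];
        const double P01 = P[0][1];
        P[0][0] -= K[0] * P00;
        P[0][1] -= K[0] * P01;
        P[1][0] -= K[1] * P00;
        P[1][1] -= K[1] * P01;
    }
    return K;
}
```

Calling it with, for example, dt = 0.01 and the variances from the post, once with 20000 and once with 40000 iterations, should give practically the same two numbers, which is the convergence Shane describes.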

I look forward to it! I’ve been trying to compare the two filters for a long time and this has helped a lot. I just never had a simple enough presentation of the Kalman filter approach to try.

What is the delta-T in your implementation?

@Shane

Okay. I will try to hopefully start working with Matlab in the coming weeks and then I will let you know what conclusion I come up with.

Delta t is the time since the calculation was last performed. See these lines at the example code: https://github.com/TKJElectronics/KalmanFilter/blob/master/examples/KalmanExample/KalmanExample.ino#L45-46.
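As a small illustration of how dt can be calculated from a microsecond counter, here is a sketch. The helper name `elapsedSeconds` is hypothetical, and it assumes a 32-bit counter that wraps around, like the value returned by micros() on an Arduino.

```cpp
#include <cassert>
#include <cmath>
#include <cstdint>

// Time in seconds between two readings of a wrapping 32-bit microsecond
// counter. The unsigned subtraction gives the right result even when the
// counter has overflowed once between the two readings.
double elapsedSeconds(std::uint32_t nowMicros, std::uint32_t lastMicros) {
    const std::uint32_t delta = static_cast<std::uint32_t>(nowMicros - lastMicros);
    return static_cast<double>(delta) / 1000000.0;
}
```

In the main loop you would store the counter value after each filter update and pass the result of this function as dt to the next update.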

Great explanation! Many people complain about Kalman Filters, but this surely will help them.

About @Shane’s comment, many industry applications use constant values for the Kalman gain (K), as it converges if the process is stationary. I tried it myself and works very nicely, specially if the processor is not very powerful.

Also, being a Control Engineer, I’d like to add a minor detail: the gimbal lock occurs because of a mechanical characteristic of the system, independently of the mathematical representation. It shows up as a loss of a degree of freedom in the Euler angle representation and a singularity in the Jacobian. But the same problem happens with quaternions. Although quaternions don’t suffer from the singularity, and therefore are able to keep tracking the angles, the mechanical gimbal lock still occurs. Think about a robotic arm and you’ll see that sometimes one of the wrists has to move very (almost infinitely) fast. That occurs because of the singularity in the Euler angles used to build the robot (which can be reflected using Euler angles in the formulation), but will happen even if we use quaternions for tracking (which will be able to track correctly, but the speed will be infinite). Unfortunately, we can’t build robot arms based on quaternions. The problem is in the inverse dynamics.

Finally, from the point of view of filtering, the gimbal lock can only be observed through Euler angles, but from the point of view of control, it can happen because of the mechanics of the robot.

This is the greatest article on this topic I’ve ever seen!

But could you please make a .pdf version too? Or, did I missed the link?

@YS

I have now generated a pdf version of the article. It can be found in the bottom of the article.

@Conrado

Thank you for your clarification! The truth is that I have not looked into the theory of quaternions as I have never needed to use them, so it’s nice to get some feedback from people like you!

Thank thee very much!!! I spend two days to read the pager….still a little hard for me, but closer to kalman filter!

Thank you!

Awesome article, really enjoyed it.

Could you please explain about the ‘physical model’ behind the equations? How did you get the matrices F and B.

Thank you!

Very nice article, Lauszus. Truly amazing! Best one I’ve seen so far. Congrats!

You said that you found an error in your original code, can you specify what and where was it?

Thank you very much for this!

@bimmer

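Okay. I will try with an example.

Remember that the state is given by:

$$x_k = \begin{bmatrix} \theta \\ \dot{\theta}_b \end{bmatrix}_k$$

We will just assume that after some samples the state is equal to:

$$x_{k-1} = \begin{bmatrix} 180 \\ 10 \end{bmatrix}$$

Which means that the angle is currently 180° and the amount the gyroscope has drifted is equal to 10°/s.

Okay now assume that the output of the gyroscope is measured as 15°/s:

$$\dot{\theta}_k = 15$$

It’s easy to understand that it’s not really 15°/s since the bias is 10°/s, so the real rate is 5°/s – this is what we are trying to achieve.

Now we will define the $F$ and $B$ matrices as we did in the blog post:

$$F = \begin{bmatrix} 1 & -\Delta t \\ 0 & 1 \end{bmatrix}, \quad B = \begin{bmatrix} \Delta t \\ 0 \end{bmatrix}$$

Let’s put it into the equation:

$$x_k = F x_{k-1} + B \dot{\theta}_k = \begin{bmatrix} 1 & -\Delta t \\ 0 & 1 \end{bmatrix} \begin{bmatrix} 180 \\ 10 \end{bmatrix} + \begin{bmatrix} \Delta t \\ 0 \end{bmatrix} 15$$

So now let’s multiply those matrices:

$$x_k = \begin{bmatrix} 180 - 10 \Delta t \\ 10 \end{bmatrix} + \begin{bmatrix} 15 \Delta t \\ 0 \end{bmatrix}$$

Now we will add them together:

$$x_k = \begin{bmatrix} 180 - 10 \Delta t + 15 \Delta t \\ 10 \end{bmatrix}$$

The end result will be:

$$x_k = \begin{bmatrix} 180 + 5 \Delta t \\ 10 \end{bmatrix}$$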

Also check out step 1 for more help.
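The same example can be run as code. This is only a sketch: the names `State` and `predictState` are made up for the illustration, and the time step of 0.01 s used in the usage note is an assumption, since the example above keeps the delta time symbolic.

```cpp
#include <cassert>
#include <cmath>

struct State {
    double angle;  // theta in degrees
    double bias;   // gyro bias in degrees per second
};

// x_k = F * x_{k-1} + B * rate, with F = [[1, -dt], [0, 1]] and B = [dt, 0]^T
State predictState(State prev, double gyroRate, double dt) {
    assert(dt > 0.0);
    State next;
    next.angle = prev.angle - dt * prev.bias + dt * gyroRate;
    next.bias = prev.bias;  // the bias is not changed by the prediction
    return next;
}
```

With a previous state of 180° and 10°/s, a measured rate of 15°/s and dt = 0.01 s, the predicted angle is 180 + 5 · 0.01 = 180.05° and the bias stays at 10°/s.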

@Claudio Donaté

See step 2.

I mention it just after the C code.

Lauszus,

I hope your high school teacher gave you an A++! Extremely impressive!!! You’ll go far, I’m sure we’ll be reading about you one day soon.

@Grant Pitzer

Thank you very much. I actually got the highest grade for the assignment (we use a different grading system in Denmark) 🙂

Lauszus,

I just received an email about this TRAX AHRS System. They advertise their use of the Kalman Filter.

http://youtu.be/LEYPILpe0Dk

Keep up the good work!

Ok, I think I am missing something, but how do you obtain z (newAngle) in step 3?

Do you have to integrate the unfiltered signal or do you need to have a some other source?

Thanks.

@Vic

It’s just the angle calculated using the accelerometers. See this example sketch on how to use it: https://github.com/TKJElectronics/Example-Sketch-for-IMU-including-Kalman-filter/blob/master/IMU6DOF/MPU6050/MPU6050.ino#L70

@Lauszus

Thanks for the quick answer.

But I am not sure how this would work in a helicopter for example. Wouldn’t this only work if the accelerometer was standing still (or moving with a constant speed)?

accYangle = (atan2(accX,accZ)+PI)*RAD_TO_DEG;

accXangle = (atan2(accY,accZ)+PI)*RAD_TO_DEG;

If it was standing still it would register $-\vec{g}$ but if it was on-board a vehicle, like a helicopter accelerating, it would register $-\vec{g} + \vec{a}$.

Finally if I was design a kalman filter for accelerometer should I also use an external source like a gps or ultrasonic range sensor for z? How about if I don’t have any other sensor, just the IMU?

Thanks again!

@Vic

No it should work in a helicopter as long as you don’t start to do loops and so on.

That’s the whole point of this Kalman filter – it combines the angle obtained from the accelerometer and the rate obtained from the gyroscope to calculate the pitch and roll.

Remember that if you want to use other inputs to the filter you’ll have to redefine the matrices.

When you write IMU do you mean only the accelerometer or also the gyroscope?

I mean the accelerometer + gyroscope?

@Lauszus

@Vic

The whole point of this post was to create the code to use with an accelerometer and gyroscope. It can be found at github: https://github.com/TKJElectronics/KalmanFilter as mentioned in the post.

Here is a basic example on how to use it: https://github.com/TKJElectronics/Example-Sketch-for-IMU-including-Kalman-filter/blob/master/IMU6DOF/MPU6050/MPU6050.ino

And here it is used for my balancing robot: https://github.com/TKJElectronics/BalancingRobotArduino/blob/master/BalancingRobotArduino.ino


Can you explain, why one of the measurement from your system sensors goes into the “measurement” vector z, but the other measurement from your system sensors goes into the “input” vector u. Neither of these is a real control input into your system, or in the case of your balancing robot, is it ?

@mich

I can’t explain exactly why I used the gyro as the control input and the accelerometer as the measurement.

I just saw other people use that, so I decided to use it as well.

i want to know weather we can use MPU-6050-Triple Axis Accelerometer Gyro

if yes how to connect it to arduino

@dheeraj

Yes you can. Check out this example: https://github.com/TKJElectronics/Example-Sketch-for-IMU-including-Kalman-filter/blob/master/IMU6DOF/MPU6050/MPU6050.ino I wrote.

I’ll have to say this is probably one of the most easy to read kalman filter application article. Good job and keep it going! I’m just wondering whether this kalman filter will also apply to two-axis angles. So I’ll have two pairs of gyro-accelerometer fusion. Do I simply double the size of every matrix since they each pair still shares the same mechanism? Thanks in advance.

@alejandroamez

Thank you for your kind words.

So you want to use it to calculate both pitch and roll? It’s really simple just create two instances: https://github.com/TKJElectronics/Example-Sketch-for-IMU-including-Kalman-filter/blob/master/IMU6DOF/MPU6050/MPU6050.ino#L4-L5.

And then you simply use them as they are two sperate libraries: https://github.com/TKJElectronics/Example-Sketch-for-IMU-including-Kalman-filter/blob/master/IMU6DOF/MPU6050/MPU6050.ino#L70-L71.

Thank you for the explain.

Why the scketch is only for two-axis angle?

For the Z axis is the same as X and Y?

@Rafael

The problem is that you can’t measure yaw using an accelerometer, so you can’t use the combination of an accelerometer and a gyroscope to calculate the yaw you will need something like a magnetometer to do that.

Thank you for the explanation.

I would like to ask you about kalmanExample how and why to set kX.setAngle(270);. I don’t understand some kind of calibration. I am new for gyro and acc.

Thank agian.

@Thamanoon

The reason why I set the angle at startup is because the filter takes time to stabilize, so if the angle started at 0 it would take some time before it ended up at 270. This means that the output would actually be at 180 at some point, so my balancing robot would think that it’s actually standing up, causing the motors to spin when it’s actually lying on the ground – which is obviously not a good thing.

Take a look at the front page of my assignment: http://www.tkjelectronics.dk/uploads/Kalman_SRP.zip (It’s the pdf named “Kristian Lauszus SRP 2011.pdf”). The blue line is the measured voltage while the red one is the estimated value using the Kalman filter, as you can see in that case it takes about 40 samples before the filter has stabilized.

In a real situation you could then set the value to the first measurement, in this case around 300, which will reduce the time it takes the filter to stabilize.

Thank you very much for your explanation. I’m more understand, will try to test and build my own balancing robot.

Ohhh ok, I understand ;D

Another question.

Why, when I modify the sketch like this:

Serial.print("accX:");Serial.print(accXangle);Serial.print("\t");

Serial.print("accY:");Serial.print(accYangle);Serial.print("\t");

Serial.print("gyroX:");Serial.print(gyroXangle);Serial.print("\t");

Serial.print("gyroY:");Serial.print(gyroYangle);Serial.print("\t");

Serial.print("compX:");Serial.print(compAngleX);Serial.print("\t");

Serial.print("compY:");Serial.print(compAngleY); Serial.print("\t");

Serial.print("KalX:");Serial.print(kalAngleX);Serial.print("\t");

Serial.print("KalY:");Serial.print(kalAngleY);Serial.print("\t");

I only added titles, but the data vary widely!!!!

Thanks!!!

@Rafael

I guess you are using this example: https://github.com/TKJElectronics/Example-Sketch-for-IMU-including-Kalman-filter/blob/master/IMU6DOF/MPU6050/MPU6050.ino, right? You are using a MPU-6050, right?

Note that you should compare accXangle, gyroXangle, compAngleX etc.

Sorry, yes I’m using MPU-6050 with this scketch.

the data of kalAngleX or Y vary more than if I don’t Introduce a Tittle…why?

Thaks for you attention

@Rafael

Are you 100% sure that this is the case, and you didn’t change anything else?

Hello Lauszus, all it’s OK, sorry. I’m the worst!!!

Hello, I’m in a project for measure angles with MPU-6050.

When I move the GY-521 a little angle in X axis …OK, but when I move a long angle (> 45º), the Y angle vary… Why?

@Afar

How are you calculating the angle?

Hi Lauszus,

I’m trying to implement this code for roll control on an RC airplane. I have it running, but it is slow to calculate theta angles for quick disturbances. Anything simple I’m missing/ way to speed up the response time? Thanks!

@Rob

You could try to “trust” the accelerometer more by lowering the Q_angle and R_measure variables.

What IMU are you using? Please report back if you find some better values for your specific IMU, so I can share it with others 🙂

@Lauszus

Example 1: I put the MPU-6050 horizontally (kalAngleX = 178 º, kalAngleY = 181 º), rotating in X axis, when I put in vertical (kalAngleX = 275 º, kalAngleY = 348 º).

Example 2: I put the MPU-6050 horizontally (kalAngleX = 177 º, kalAngleY = 178 º), rotating in Y axis, when I put in vertical (kalAngleX = 272 º, kalAngleY = 38 º).

Don’t understand why the opposite axis values vary! They vary in the last angles… I don’t understand something…

Thanks!

Thanks for the quick response Lauszus! We found that using only simple low pass filter with only accelerometer data was good enough for roll control. I still want to try to speed the Kalman filter up for a future controller, just haven’t had enough time recently. I’ll be sure to post back if I figure it out.

@Rob

Okay. Glad you solved your problem 🙂

Hi,May i noe hw kalman filter can be implement into Matlab coding?

@kelvin

Check out this video series: http://youtu.be/FkCT_LV9Syk where he implements a Kalman filter in Matlab.

Thanks for the link, my accelerometer data is in term of G-force and gyroscope data is in term of radian/s…Do you know how to process the data to implement into kalman filter? i am kinda lost

@kelvin

You need to convert it from g-force into degrees. Simply use atan2 like so: https://github.com/TKJElectronics/BalancingRobotArduino/blob/master/BalancingRobotArduino.ino#L362.

I don’t have much experience with Matlab, sorry.
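As a sketch of the conversions, assuming accelerometer readings in g and gyroscope readings in radians per second: the angle formula is the same atan2 expression used in the example sketches, while the helper names `accelAngleDeg` and `gyroRateDegPerSec` are made up for this illustration.

```cpp
#include <cassert>
#include <cmath>

const double kPi = std::acos(-1.0);
const double kRadToDeg = 180.0 / kPi;

// Angle in degrees (0 to 360) from two accelerometer axes. The unit of the
// readings (g or raw counts) does not matter, since only the ratio is used.
double accelAngleDeg(double accAxis, double accZ) {
    return (std::atan2(accAxis, accZ) + kPi) * kRadToDeg;
}

// Gyro rate converted from radians per second to degrees per second,
// which is the unit the filter in this post expects.
double gyroRateDegPerSec(double rateRadPerSec) {
    return rateRadPerSec * kRadToDeg;
}
```

The result of `accelAngleDeg` is what goes into the filter as the measured angle, and the result of `gyroRateDegPerSec` as the rate.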

Hi, Lauszus, do you have any idea how to implement accelerometer and gyroscope data into kalman filter?

@kelvin

See the source code: https://github.com/TKJElectronics/KalmanFilter/blob/master/Kalman.h and here is an example: https://github.com/TKJElectronics/Example-Sketch-for-IMU-including-Kalman-filter/tree/master/IMU6DOF/MPU6050

Hello. Nice work! I was testing your example code on an MPU6050 and got some strange numbers. When I tilt the sensor along the +x-axis by more than 90 degrees, the angle suddenly makes a large jump. Also, is the calculated Kalman angle in degrees? When I compare the data from the sensor with real-life movement, it’s wrong. I’m just trying to understand what’s happening.

@Emre

What code are you using? Try the code I wrote: https://github.com/TKJElectronics/Example-Sketch-for-IMU-including-Kalman-filter/tree/master/IMU6DOF/MPU6050

I have tested your code, and the same thing happens there. It’s as if there is something wrong with the acc or gyro, because it jumps on both axes. But the raw gyro and acc data seem to be correct if you print them. Is it some limitation? I know that you can’t roll or pitch 360 degrees, but over 90 should be fine? And is the angle from the Kalman filter in degrees?

@Emre

It sounds like something is wrong with your sensor. You should be able to get 360 degrees resolution just fine.

Yes the output from the Kalman filter is in degrees.

Hmm strange..I will check with another sensor.

Nice explanation! Now I’m getting the hang of it.

A question though: your state matrix contains the orientation and its bias, but from what I see in the multiplication, the bias part is always multiplied by 0, so in my opinion this value will not change. Can you explain more about how we can get the bias from this model?

@Davidex

See my old reply: http://blog.tkjelectronics.dk/2012/09/a-practical-approach-to-kalman-filter-and-how-to-implement-it/comment-page-1/#comment-57783. I don’t multiply it by 0, where do you read that?

Hello Lauszus,

My problem is the same problem as Emre.

When I tilt the sensor by more than 90 degrees, the angle suddenly makes a large jump. Why?!

Thanks.

@Afar

Try to check your raw readings; do they look alright? You might be experiencing what’s called gimbal lock; see this comment for more information: http://blog.tkjelectronics.dk/2012/09/a-practical-approach-to-kalman-filter-and-how-to-implement-it/comment-page-1/#comment-55104.

Hi, this is a silly question probably, but in your code, why do you not include the kalman filtered angle of z? I need the z angle in my implementation and I am trying to figure out how to implement it.

@Alfred

You are talking about yaw, right? The problem is that you can’t obtain yaw using an accelerometer, so you will need a magnetometer instead to measure yaw. Simply calculate the yaw angle using the magnetometer and use it as the first argument. The second argument is the gyroscope readings in deg/s.
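A minimal sketch of the magnetometer step, under loud assumptions: the sensor is held level (no tilt compensation), the magnetometer is calibrated, and heading is measured from the sensor’s x-axis towards its y-axis. The function name `magHeadingDeg` is hypothetical, and the sign or axis order may need to change for a particular board.

```cpp
#include <cmath>

// Hypothetical helper: heading in degrees, in [0, 360), from the horizontal
// magnetometer components of a LEVEL sensor. No tilt compensation and no
// magnetic declination correction are applied.
double magHeadingDeg(double magX, double magY) {
    double heading = std::atan2(magY, magX) * 180.0 / 3.14159265358979323846;
    if (heading < 0.0) {
        heading += 360.0;  // map (-180, 180] onto [0, 360)
    }
    return heading;
}
```

The result would take the place of the accelerometer angle as the first argument, with the z-axis gyro rate in deg/s as the second. Note that the heading wraps between 360 and 0 degrees, which probably needs special handling before filtering.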

Hi!

This seems to work just fine for slow changes, however – if I quickly tilt my board (with both the accelerometer and the gyro on it) around just one axis, the other measured axis experiences a sudden jump in angle too, before decaying back to the actual measurement. Why is that and how to fix it?

Many thanks!

@Janis

Try to tune the filter by following the guidelines I wrote in the blog post 🙂

You probably have to trust the gyroscope or the previous value more.

@Lauszus

Thanks for the VERY quick reply! 🙂

I’m a bit embarrassed about my problem, since it was simply a case of having the gyro inputs swapped around for both axes… Not easy to spot if it goes back all the way to naming variables! 🙂

However, after fixing that, I feel that the overshoot for quicker angle changes is too big for my needs – I reckon that’s got everything to do with the Q_gyroBias variable?

@Janis

Okay 🙂 Hmm you should try lowering the R_measure value instead, by calling setRmeasure: https://github.com/TKJElectronics/KalmanFilter/blob/master/Kalman.h#L84.

Hi Kristian,

I just wanted to say thank you for posting this. I especially appreciate the great detail you have gone into explaining the separate steps which have helped me understand where I went wrong in my implementation of Kalman.

Congrats on the kickstarter project!

Cheers

@Brennon

Thank you 🙂

Hi. I’ve been trying hard to follow this and implement it on a dsPIC using a min9-imu from Pololu. It appears that the pitch value just keeps incrementing every time the function that performs the pitch calculation runs. I have verified that my getRateX and getAngleX functions are working correctly.

@klayton

Try to convert your gyro rate into degrees to make sure you don’t have to invert the gyro axis.

Hi Lauszus! Your article is great. I have a problem: I use your code, but it gives errors when compiling. How can I fix it? Thanks in advance.

@kim

Please send the output and then I will have a look at it.

Oh! I made a mistake: I forgot to add I2C.h to the Arduino libraries. Now I can run your code. Sorry, Lauszus! @Lauszus

Lauszus,

Sorry to bother you a little bit. Can you explain in more depth why the accelerometer can’t measure yaw? I don’t really understand that! Thank you very much! 🙂

@kim

The accelerometer calculates the angle by measuring the gravitational acceleration – see: http://en.wikipedia.org/wiki/Standard_gravity.

Since the gravitational acceleration doesn’t change when you rotate the accelerometer along yaw, it will not be able to detect that it’s actually rotating.

Try to print out the raw values and then put the accelerometer on a table and then start to rotate it – you will see that none of the values will change (they will change a little bit due to the fact that you are applying some acceleration to the accelerometer when you rotate it).

@Lauszus

Thanks for your answer! In your sketch, do you refer to roll, pitch and yaw as the X, Y and Z axes respectively?

Thank you so much for your work !

It works very well, and it was exactly what I ‘m looking for !

Thank you !

@kim

No. X, Y and Z simply refer to the axes of the different sensors.

@Winnie

Glad to hear the library is coming to good use 🙂

Hi, this is helping me build a balancing robot, but I have no PC software to analyze the gyro and acc signals (converted to digital). Can you send me the software shown on the website to 272962111@qq.com? Thank you so much!

@Dave

I used the following code to see the data: https://github.com/TKJElectronics/Example-Sketch-for-IMU-including-Kalman-filter/tree/master/IMU6DOF/MPU6050/Graph.

Simply navigate to the root: https://github.com/TKJElectronics/Example-Sketch-for-IMU-including-Kalman-filter and click on the button labeled “ZIP”.

Hi Kris,

Before I write a translation of your SRP 2011 Mat/El-teknik Holstebro paper. Do you know if a written English translation is available? If I do write a translation to English is it OK for me to post it on the blog/website that I am now very early in starting up?

Best,

Dale Mahan

@Dale Mahan

No, there is no English version available 🙂 It would be really nice if you took the time to translate it!

Do you speak Danish or are you going to use Google Translate or something like that?

@Lauszus

Regrettably, I do not speak Danish. Just some German and Spanish. But, I like the way you write tutorials. I’ll use Google Translate with some sort of PDF editor. Reformatting the mathematical expressions can also be a challenge if the Danish text changes in English enough to go to the last or next line. I have already tried a couple bulk machine translators of the original document, and I am not satisfied with the badly formatted and partially untranslated results that I have “achieved” so far. I will be reading it for content and will change the Google translate format if the English structure becomes clumsy. If I have questions, I’ll ask you what you intended to say if Google translate does not do it.

@Dale Mahan

Okay great! 🙂

You can add me on Google plus or email me directly at kristianl@tkjelectronics.dk if you need me to translate some part of it.

Hi,

This was a great article, thanks!

I’m trying to develop velocity and position from gyro, accelerometer and GPS. I already have the attitude from fusing the sensors, but I need to integrate the accelerometers twice. I’m thinking of trying a Kalman filter for this but I’m getting a little lost. I’m mostly concerned with transverse (y direction) motions, but getting a full 3D trajectory (or even just 2D, x and y) would be nice. However I’m at a loss for what to use as my measured values, since I would already use the accelerations as my input. And I would suspect that the velocity and positions will be somewhat correlated, so I’m assuming that for my Q matrix I should put in some of the cross terms, but I don’t really know how to tune those.

If you’ve seen anything like this before, or have any insight on how to proceed, any help would be much appreciated.

Thanks.

@Brady

Sorry for the long delay, but as you might have noticed our site has been down due to a malware script attack. I’m sorry but I have never seen anything like that, so I can’t help you out.

Hi,

I have an interesting question. Assume if the COM was exactly vertical (as measured by the IMU) then the stable angle would be Theta = 0 deg. Suppose that the center-of-mass is not exactly vertical but instead it is offset by an angle ThetaD from the vertical. Now the stable angle would be Theta = ThetaD.

Question: How do I find this angle ThetaD? During construction and component placement it is very possible that the COM ends up offset to one side of the body.

One way would be to feed the PID so that it drives the gyro reading to zero, but then how can we make the robot move forward?

@Lurk

I guess that you are talking about something like a balancing robot, right?

It’s pretty easy. Simply adjust the setpoint to the PID loop. In the case of the Balanduino that variable is called the “targetAngle” and this can easily be set using the Android application or via your PC.

See the following Kickstarter update: http://www.kickstarter.com/projects/tkjelectronics/balanduino-balancing-robot-kit/posts/450808 where I did exactly that.

Also check out the source code: https://github.com/TKJElectronics/Balanduino/tree/master/Firmware/Balanduino. In particular this line: https://github.com/TKJElectronics/Balanduino/blob/e754a85bdb59abe988d25c7403e8c7216d23144e/Firmware/Balanduino/Balanduino.ino#L239 and the function it calls: https://github.com/TKJElectronics/Balanduino/blob/e754a85bdb59abe988d25c7403e8c7216d23144e/Firmware/Balanduino/PID.ino.

Hi,

I would like to thank you for posting this. Now it is very easy to understand the model.

Is it possible to implement this in Matlab?

Also, if I need to model a robot for its navigation using this filter model, how should I proceed?

Thanks in advance.

@shan

Thanks.

Yes, it is possible, but I have never had the time to actually do it. If you do, then please post your results.

I can’t help you with that, sorry.

Hi, this looks really good. We have a project which uses a 3D gyro and accelerometer to work as a digital compass. Ideally we would like this to work in 3 dimensions but having it working in the horizontal plane would be good. At present the system without any Kalman filtering drifts over time no matter how accurate the calibration is.

The project cannot use a magnetometer or digital compass due to stray magnetism from ferrous metal objects. The project manager stresses this. At present we are using a digital compass but the metal objects need to be demagnetised all the time and the accuracy is not good.

The question is, is it possible to implement a yaw sensor using a 3D accelerometer and gyro (with calibration routines)? Your professional opinion would be appreciated before we jump in to this. By the way, nice article

@FD

To get a stable and non-drifting Yaw reading you will need a proper reference sensor to even out the drifting from the Gyro.

With only a 6-DOF sensor, as a 3D gyro and accelerometer is called, you can only measure yaw using the Z-axis gyroscope. The accelerometer cannot sense rotation around the Z-axis, because gravity gives it no reference for this.

So to get a proper stable Yaw reading you will need to have a magnetometer or other Yaw reference sensor that doesn’t drift!

Regards Thomas

Thanks Mr. Lauszus for your explanation of the Kalman filter theory. I have a question about your code on GitHub. In your code I found “timer = micros()”; why do you add a timer to your code? And when converting the gyro reading to degrees you multiply gyroRate by “(double)(micros()-timer)/1000000)”. Can you explain what that expression is for? Why do you multiply gyroRate by it?

Thank you very much for the tutorials.

I would be very happy if you could reply to my comment and send the reply to my email. Thanks, Mr. Lauszus.

@ardiantara

Hi Ardiantara.

I will be taking the opportunity to answer for Lauszus this time.

The micros() function in Arduino returns the current time in microseconds since you turned on the microcontroller.

So by saving this timestamp in a variable (timer) and then subtracting this in the next loop, we can measure how much time has passed since last time.

And as gyroRate is the rotational speed (degrees per second) at which the gyro is currently turning, we can use the measured time to calculate how many degrees it has turned since last time.

The reason for the division by a million, is to convert the delta microseconds into delta seconds.

Regards Thomas

Thanks a lot Mr. Thomas. I have another question: is the sample rate you use to convert gyroRate to degrees the time the microcontroller takes to run each loop? Why not use the sample rate of the sensor? I found the sample rate of the MPU-6050 in the library:

“* 0 | 260Hz | 0ms | 256Hz | 0.98ms | 8kHz”

I’m sorry, it looks like I have a lot of questions on my mind. I am confused about tuning the variables

Q_angle = 0.001;

Q_bias = 0.003;

R_measure = 0.03;

What impact does setting these variables have on the system? I tried tuning the variables, but the output angle did not show any difference.

thank you very much

@ardiantara

You could do that, but then you would need to set up the interrupt output on the MPU-6050 so it fires every time new data is available, and then connect the pin to an external interrupt on the Arduino.

If you are reading the data in a loop as shown in the example you need to measure time yourself.

Read the post again please. It starts just above “The measurement”.

@Lauszus

OK Mr. Lauszus, I hope you will always be a superhero for people like me who are beginners in understanding the filter. Thank you very much.

@ardiantara

Haha thanks for the kind words 😉

Hi,

Well done on explaining the Kalman filter in a way that can be implemented practically. I have tried it and it works, but I have a problem with lateral acceleration. It still detects lateral acceleration as pitch no matter how much I play with R, Q and Qb. I have tried to increase R significantly. Do you have any suggestions?

Thanks

@Krishna

Thank you.

Try to implement a low pass filter before the Kalman filter and see if it helps.

@Lauszus

Hi,

Thanks for the reply. Yes, I have tried a low pass filter; it helps a little, but it slows down the response very much.

@Krishna

Okay. What application are you using it for?

@Lauszus

I am using it in a car to obtain pitch and roll.

@Krishna

Okay. You might have to use a magnetometer too if you can’t find a compromise between noise and latency.

@Lauszus

Magnetometer for pitch? I always thought they were for yaw. Ok I will look into it. Thanks for your help.

@krishna

I’m pretty sure it’s possible with a more advanced algorithm like the extended Kalman filter or a DCM algorithm. You need a gyroscope too of course.

Yes, I was also thinking about the extended Kalman filter, but I am still wondering how the magnetometer works to measure pitch. I know it can be used for yaw as it gives heading with respect to North.

@krishna

Sorry I don’t know what I was thinking about. The magnetometer is used for yaw.

Hey Lauszus, thank you very much, this article was like a painkiller for me. I understand Kalman much better now.

But I need a little help with the code.

When it comes to combining the bytes into doubles, I didn’t understand the logic. I found an explanation in the code like this:

// Gyro format is MSB first

gyroXrate = buffer[0] << 8 | buffer[1];

………

// Accel is LSB first.

accelX = buffer[7] << 8 | buffer[6];

……..

How do I know which byte is first, and what exactly does it mean?

@Lauszus

Ok no problem. So how can this problem be solved? I tried the low pass filter but I have to use something with less than 0dB gain at low frequency to make it work. This is not traditionally a LPF as it is supposed to allow everything unaltered at lower frequencies. Any ideas?

Thanks

@yusuf

First of all, sorry for the long delay!

It’s not converted to doubles, but words (16-bit).

For an explanation of MSB and LSB see the following pages: http://en.wikipedia.org/wiki/Most_significant_bit and http://en.wikipedia.org/wiki/Least_significant_bit.
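To make the byte order concrete, here is a small sketch of the two cases from the question. The function names are hypothetical; which order applies to a given sensor has to be looked up in its datasheet.

```cpp
#include <cstdint>

// MSB first: the high byte arrives first, as in
//   buffer[0] << 8 | buffer[1]
int16_t combineMsbFirst(uint8_t first, uint8_t second) {
    return static_cast<int16_t>((static_cast<uint16_t>(first) << 8) | second);
}

// LSB first: the low byte arrives first, so the SECOND byte is shifted up, as in
//   buffer[7] << 8 | buffer[6]
int16_t combineLsbFirst(uint8_t first, uint8_t second) {
    return static_cast<int16_t>((static_cast<uint16_t>(second) << 8) | first);
}
```

With the bytes 0x12 and 0x34 arriving in that order, MSB first gives 0x1234, while LSB first gives 0x3412. The result is a signed 16-bit word, so the bytes 0xFF, 0xFE read MSB first come out as -2.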

@krishna

Sorry for the long response time to you too!

I think you need some more advanced algorithm. Try to google DCM or extended Kalman filter.

@Lauszus

Thanks for the help. I will look into it.

Hello, thank you for your article. I am a little confused by your measurement model. I wonder how you relate the accelerometer reading to the angle. It seems to me that Z(k) denotes the accelerometer reading and X(k) is the angle at state k. How did you relate them using H=1 in Z(k) = H*X(k)? Thanks a lot!

@YANG

That is because I convert the accelerometer reading into degrees before it’s used as an input to the filter. See the following lines in the example code: https://github.com/TKJElectronics/KalmanFilter/blob/master/examples/MPU6050/MPU6050.ino#L89-L90.

Hi,

Thank you for a very good kalman tutorial.

I have questions from your example.

https://github.com/TKJElectronics/KalmanFilter/blob/master/examples/MPU6050/MPU6050.ino

1. Why do you use accY to find accXangle and accX to find accYangle (lines 89-90)?

Why not use accX for accXangle and accY for accYangle?

So your code should be:

accXangle = (atan2(accX,accZ)+PI)*RAD_TO_DEG;

accYangle = (atan2(accY,accZ)+PI)*RAD_TO_DEG;

2. Again from the link above, on lines 99-100:

Why do you use gyroXrate to find compAngleX and gyroYrate to find compAngleY?

If we look in the datasheet, the acc X-axis rotates around the gyro Y-axis, so we should use gyroXrate to find compAngleY and gyroYrate to find compAngleX.

Thank you.

@Beran

Hi Beran.

It’s quite interesting to read your question, as you almost answer your first question with your second.

I’ll give you some more details about the case and why you have been confused.

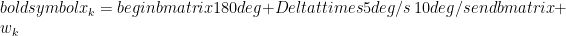

Have a look at the picture above. The 6-axis sensor, for example the MPU6050, contains a 3-axis accelerometer that measures the acceleration in 3 different directions: X, Y and Z. In our case the acceleration is the gravity from the earth.

The other three axes belong to the gyroscope, which measures the rotation around each axis. So when we start tilting the robot, the gyro values will increase and show the speed at which we are rotating the robot.

So to actually answer both of your questions, as they deal with the same confusion, look at the picture and think about what happens to the values of the different axes when you tilt it around the x-axis.

By tilting the device around the x-axis, the gyroscope x-value will change and indicate the rotational speed.

The accelerometer x-axis however will stay stable, as that is still pointing out to you, as you are only tilting it around this axis.

Instead both the z-axis and y-axis values of the accelerometer will change according to the impact of the gravity on both of them.

I hope this clarified both of your questions, and that you now understand why we had to choose the values from the axes as we did.

Kind regards

Thomas Jespersen
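The tilt described in this reply can be sketched with an idealized sensor model. The struct `Accel` and both function names are hypothetical, and the signs are an assumption: on a real board one of the axes may be flipped depending on mounting.

```cpp
#include <cmath>

struct Accel { double x, y, z; };

const double kPi = 3.14159265358979323846;

// Ideal stationary reading (in g) after tilting the sensor by rollDeg around
// its x-axis. Sign convention assumed for illustration only.
Accel tiltAroundX(double rollDeg) {
    const double a = rollDeg * kPi / 180.0;
    // x points along the rotation axis, so it sees no gravity;
    // y and z share gravity between them.
    return {0.0, std::sin(a), std::cos(a)};
}

// Angle around the x-axis recovered from the two axes that actually change.
double angleAroundXDeg(const Accel& acc) {
    return std::atan2(acc.y, acc.z) * 180.0 / kPi;
}
```

Tilting by 30 degrees gives a reading of roughly (0, 0.5, 0.87), and the angle comes back from the y and z values alone. This is why the angle around x is computed from accY and accZ, while the gyro x-axis supplies the matching rate.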

Hi thank you.

http://s10.postimg.org/7ekox96h5/Snap2.jpg

From the MPU6050 picture in the link above: if AccX (+X axis) changes +10 degrees from horizontal, which gyro axis changes?

I think Gyro-Y should change, so why do we not use gyroYrate in line 99?

Here is your code at line 99:

compAngleX = (0.93*(compAngleX+(gyroXrate*(double)(micros()-timer)/1000000)))+(0.07*accXangle);

Thank you again.

Should your code be used with this board?

https://www.sparkfun.com/products/retired/10010

and not for the MPU-6050?

Sorry if I misunderstood.

This is a very good article and first of all I want to thank you so much.

I’m new to this topic, so if my question is nonsensical please forgive me.

What is the physical meaning of P00 or P01? In other words, if for example P00 is 5 or 10, what is the meaning of these numbers?

Thank you.

@Beran

Please read Thomas’ explanation again. Remember that the accelerometer measures the acceleration in a certain direction (think of it as vectors), while the gyro measures the rotational speed (measured in deg/s) around an axis.

I originally posted the code for the LPR530AL, LY530ALH and ADXL335 combo, but afterwards I created similar code for the MPU-6050. See: https://github.com/TKJElectronics/Example-Sketch-for-IMU-including-Kalman-filter/tree/master/IMU6DOF/MPU6050.

@Şükrü

Those values are part of the error covariance matrix. See the text after the headline “Predict”. It explains what it is.

Hi Lauszus, I am sorry to tell you that none of the pictures in your article can be seen at the moment. Is that caused by GitHub parsers or something else?

@bow

I can see the pictures just fine. Are you still affected by the problem?

Btw they are not hosted on GitHub, but are written in LaTeX and then automatically generated by a WordPress plugin called “WP LaTeX” 😉

Hello everyone, and thanks to Lauszus for this great tutorial! I got a question:

I’m using the Microchip Motion Sense board, which has the MPU6050 and an AK8975 magnetometer. Microchip also provides libraries to use this board and obtain quaternion data (in 3D). I think it is possible, with some operations, to convert this quaternion data into yaw, pitch and roll angles for use in a drone.

But for such dynamic systems you need, for example, a complementary or Kalman filter to obtain a good solution, and Lauszus’ post is a good explanation of this. But I’m a bit confused… The MPU6050 can work in combination with the magnetometer, and Microchip did that for this board. I asked them how to get the angles, and they told me to filter the accel data (complementary filter)… So in the end I think I need to read the gyro, accel and magnetometer and fuse them in a Kalman filter, maybe an extended Kalman filter???

I’ve read a lot of info and I think I’m a bit mixed up! Also, Lauszus posted a link in another forum to a Kalman implementation in C#, but the link is dead; it would be great to have this info for C#.

Thanks a lot!

@Andrew

You will have to use the magnetometer and gyro to estimate the yaw. Then you simply create another instance of the library and use the magnetometer angle as the first argument instead of the accelerometer.

But if you need full 3D measurement, then it would be better to use an extended Kalman filter or a DCM algorithm.

What link are you talking about?

I can’t remember now; you answered another person something about the extended Kalman filter. Do you have some info about this extended version to get started? Sorry, but I don’t understand what you mean about the accelerometer…

Yaw -> Magnetometer and gyro.

Pitch and roll?

Thanks again Lauszus.

@Andrew

No I don’t, sorry.

Use the magnetometer and gyro for yaw and then gyro and accelerometer for pitch and roll.

https://github.com/TKJElectronics/Example-Sketch-for-IMU-including-Kalman-filter/blob/master/IMU6DOF/MPU6050/MPU6050.ino#L89

Is the accXangle in the link above the angle from the Z-axis to the projection of X on the YZ plane?

Any images are appreciated.

Thank you.

@Smad

No, the accXangle is the rotation around the x-axis. See the following reply: http://blog.tkjelectronics.dk/2012/09/a-practical-approach-to-kalman-filter-and-how-to-implement-it/#comment-431999.

Hi,

First of all, I want to thank you so much for your article. It’s really helpful.

So, here’s my question. In this example:

https://github.com/TKJElectronics/KalmanFilter/blob/master/examples/MPU6050/MPU6050.ino

You calculate accXangle and accYangle, which are the rotations about the x-axis and y-axis respectively. But what about accZangle? Can we get it (for example) from the accelerometer output only, as accZangle = (atan2(accX,accY)+PI)*RAD_TO_DEG?

Thank you

@Yvan

No, it is not possible to estimate yaw using an accelerometer. Remember that each axis measures the gravitational force along its own direction; see them as vectors.

When you rotate the IMU around the z-axis, none of the axis readings will change, as the gravitational force doesn’t change along any of the axes.

You will need a gyro and a magnetometer to estimate yaw.

Thanks a lot for this guide Lauszus! It is incredibly well explained and the Arduino library is terrific as well! I managed to get it working with Pololu minIMU v2 even though I have almost no idea about different gyro and compass (they refer to the accelerometer and magnetometer as a compass) set-up modes – in terms of refresh rates, sensitivity and so on. I will try implementing yaw as well using the magnetometer and gyro as you’ve explained in the comments before, but because I need it for a 3D application I’ll have to try using extended Kalman. I also wanted to mention that there is sample Arduino code for Pololu minIMU that uses DCM and there is a vPython program that shows 3D real time animation of the sensor – maybe you or someone reading this might be interested.

Bottom line – AMAZING GUIDE – you belong in MIT!!!

@Peter

Glad you found my guide useful 🙂

I might look into the extended Kalman filter in the future and DCM, but I’m too busy right now to dig into it!

Haha yeah maybe. I’m actually thinking of studying abroad next year, so I’m thinking of applying to MIT to see if I can get in.

Hi! Thank you for your answer, I understand better now.

However, I have another little problem. I tried to implement this code: https://github.com/TKJElectronics/KalmanFilter/blob/master/examples/MPU6050/MPU6050.ino

It works, but I get an overshoot that I can’t disregard. For example, if I try to get 180°, I get about 190°, which then slowly goes to 180°. I don’t know how I can fix it.

Thanks, best regards

@Yvan

It sounds like you are trusting the measurement of the accelerometer too much.

See the following part of the guide:

Hi, Thanks for the code on GitHub, I use it for my quadcopter controller (with a MPU 6050). I have got one question though. On this file: https://github.com/TKJElectronics/Example-Sketch-for-IMU-including-Kalman-filter/blob/master/IMU6DOF/MPU6050/MPU6050.ino

You set the Gyro Full Scale Range to ±250deg/s. And after that you write:

double gyroXrate = (double)gyroX/131.0;

I don’t see where that “131.0” comes from?

I mean it works but I’d like to understand why 🙂

Thanks, Romain

@Romain

See the Register Map datasheet: http://invensense.com/mems/gyro/documents/RM-MPU-6000A-00v4.2.pdf. On page 14 you can see that writing a 0 to the FS_SEL register sets the range to ±250deg/s.

After that, see the Product Specification: http://invensense.com/mems/gyro/documents/PS-MPU-6000A-00v3.4.pdf. On page 12 you will see that if the FS_SEL register is set to 0, the sensitivity is 131 LSB/(º/s) 😉

Just a thanks for taking the time to explain this. I hope I can use some of the knowledge when I try to build a robot.

@Graham Stott

You are welcome 🙂

Thank Lauszus so much, it’s helpful for me. You’re very nice.

Firstly, thanks for sharing this! ;)

I am working on a platform that will obtain data from a quadrotor for system identification. I am thinking about using a 6-DOF IMU (accelerometer + gyroscope) with a Kalman filter to estimate the quadrotor’s state. Do you think that an accelerometer and gyroscope alone are enough for estimating the quadrotor’s state?

Thank you Lauszus for this wonderful tutorial. I’ve been reading a bunch of papers but couldn’t understand them, as they assumed that I know most of the maths. Your step by step guide and the links provided were exactly what I needed.

Just a quick question: I intend to create a program which uses an accelerometer, gyro and magnetometer to get the orientation, plus maybe another reading of the roll and pitch. From the comments above I was led to believe that for such a situation I need to use an extended Kalman filter. Is that right? If so, can you please point me to some good tutorials for it?

@juve

Yes, an accelerometer and gyro should be enough. For instance the very popular KK2.x board uses just that: http://www.hobbyking.com/hobbyking/store/uh_viewitem.asp?idproduct=49254.

@Jeremy Borg

Yes, if you need to estimate 3D orientation I recommend trying some sort of DCM or extended Kalman filter, as this filter is best for only one axis, like I use for the Balanduino: http://www.kickstarter.com/projects/tkjelectronics/balanduino-balancing-robot-kit.

I do not know of any good tutorials out there, but there are many open source flight controllers for multirotors; you could just use the algorithm from one of them.

Some thoughts:

1. Even with a PERFECT gyro signal, PID control is not sufficient.

http://ctms.engin.umich.edu/CTMS/index.phpexample=InvertedPendulum§ion=ControlRootLocus

2. A controller with positive feedback is necessary.

http://web.mit.edu/klund/www/papers/UNP_pendulum.pdf

3. Your accelerometer is actually part of a positive feedback loop within the control. Other solutions which do the same are using wheel encoders for positive feedback or doing it all within the controller itself which requires neither an accelerometer nor wheel encoders.

You really do not need Kalman filtering.

@CharlieSixPack

Sorry, but the first link is dead?

I’m sorry, but are you referring to the Balanduino? Or something else?

I use the Kalman filter in combination with the encoders to balance my robot.

You can find the relevant code here: https://github.com/TKJElectronics/Balanduino/blob/master/Firmware/Balanduino/Motor.ino.

Thank you very much for a very well detailed article on Kalman Filters. You not only understand the topic very well but are able to explain it very clearly!

Hi, thanks for your magically written code. I’m using mikroC for dsPIC and a Pololu MinImu 9v2 as the sensor. mikroC has no micros() function. What can I write instead? Can I use this code instead of micros() and timer? gyroYangle increases constantly this way.

int lastTime=0, firstTime=0;

void(){

while(1){

lastTime++;

//Codes…

gyroYrate = -(gyroY/8.75);

gyroYangle += gyroYrate*(lastTime-firstTime);

// More other codes.. }}

I think 8.75 is the correct coefficient for 250dps. Gyro datasheet: http://www.pololu.com/file/0J563/L3GD20.pdf

Hi,

This is really a good piece of work that you have done. I am also using the MPU-6050 in my project, which is a bit different from yours, and I hope you can help me out.

I am trying to find the longitudinal velocity of a vehicle using the accelerometer data. I was able to access the DMP data to get the real-world acceleration (gravity and orientation compensated), and the next step is to extract velocity from acceleration. I tried doing simple integration on the data, but it drifts over time. I’ve done this in my code:

if (fabs(accX) > Threshold)

{

velocity = velocity + dT*accX;

}

else

velocity = 0;

And then I saw your post on the Kalman filter, which sounds perfect for this type of application. So here’s the problem: I am not sure about all the matrices that I’ve designed for it. Could you please have a look at them and let me know if I am on the right path?

F = [1 dT; 0 1]

H = [1 0]

X = [0 ; 0]

U = [0 ; 0]

P = [10 0 ; 0 1]

R = [1]

I guess something is wrong with my equations; that’s why I am not getting the results that I want. Help will be really appreciated.

Thank you.

@Peo Neak

No, you need the sample time in seconds between each reading, or you won’t be able to calculate the angle.

@Alex

That is really really hard to do – just Google it. You are not the first!

I recommend using some other kind of sensor like encoders. You will never be able to get proper measurements from the accelerometer – or it will at least be very very hard to get precise measurements.
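To illustrate why plain integration drifts, here is a small sketch with made-up numbers. It assumes the vehicle is standing still and the accelerometer reading contains only a small constant offset; the function name is hypothetical:

```cpp
#include <cassert>

// Integrate acceleration samples into velocity: v += a * dt.
// biasMs2 is a constant measurement error in m/s^2; the true acceleration is zero.
double velocityErrorAfter(double biasMs2, double dtSeconds, int samples) {
  assert(dtSeconds > 0.0 && samples >= 0);
  double velocity = 0.0;
  for (int i = 0; i < samples; ++i) {
    velocity += biasMs2 * dtSeconds;  // the only input is the bias
  }
  return velocity;  // grows linearly with time: about biasMs2 * samples * dtSeconds
}
```

With an assumed offset of 0.05 m/s^2 sampled at 100 Hz, velocityErrorAfter(0.05, 0.01, 6000) is about 3 m/s after one minute, even though nothing moved. Nothing in the accelerometer data alone can tell that error apart from real motion, which is why an independent reference is needed.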

@Lauszus

Thanks for your reply. Yes, I have already looked into it on Google and reviewed some papers. It is hard, I know. But I really need to do it without encoders, and for that I have bought a GPS module to correct the accelerometer data every second. Would you please help me design the state equations for the integration (GPS + INS)?

Thank you.

@Alex

I will not be able to do that, sorry.

@Alex

Here is a report I did implementing a Kalman Filter using the accelerometer as control input and GPS velocity and position as independent measurements.

@Dave

Thanks for sharing Dave!

Hi, thanks for the post.

I’m using the TI SensorTag to get accelerometer and gyroscope data to develop an app that can rotate a square in 3D space using that data, but I’m having trouble computing the quaternion values. I need the quaternion values in order to display rotation and movement of the SensorTag device in my app. Do you know how to compute the quaternion values from the accelerometer and gyroscope data? Thanks -chris

@chris

You should check out the following site: http://www.x-io.co.uk/open-source-imu-and-ahrs-algorithms/. I have not used it myself, so unfortunately I can not help you with it.

This is a great article!!!

I have some questions about your project

(1) Because an accelerometer measures both gravity and the acceleration caused by motion, it will not only detect gravitational acceleration!

How did you remove the acceleration caused by motion from the body frame?

(2) Which rotation sequence did you choose?

(The 3-2-1 Euler angles are one of the most widely used parameterisations of rotations.)

(3) If you chose the 3-2-1 Euler angle rotation sequence, I think that your “accXangle” and “accYangle” have a problem.

It should be:

roll: accXangle = (atan2(accY, accZ) + PI) * RAD_TO_DEG;

pitch: accYangle = (atan2(-accX, sqrt((accZ*accZ) + (accY*accY))) + PI) * RAD_TO_DEG;

Here is the derivation of the equations:

http://avalon.tilab.com/pipermail/spine-dev/attachments/20090507/cb8e678e/getPDF-0001.pdf

http://ppt.cc/nHYQ

thanks!!

hello lauszus

Very nice article.

This may be a stupid question – I am not a programmer – but I need to know so I will ask anyway.

You write in the Github:

“This is a Kalman filter library for any microcontroller that supports float math”

Can you please tell me if I can use an Atmega 8-bit microcontroller with this filter

(Atmega16, Atmega32 or Atmega2560)?

Also, does Arduino support floating point math?

Thanks!

joseph

Plz Plz Plz HELP ME

Thanks to you I finally found code for a Kalman filter.

I am currently working on my quadrotor project. I am using an ADXL335 accelerometer and an L3G4200D gyroscope interfaced with an ATmega128. When I check the readings from the accelerometer without running the motors, the values are accurate and stable. But when I start the motors, the values start to fluctuate. The more I increase the speed, the more they fluctuate. I tried your code for the Kalman filter, and the results seem the same, just fluctuating less. My gyroscope readings also drift too much. Is this supposed to happen, or am I doing something wrong?

@eric

1. That is the point of the Kalman filter. It filters out the noise.

2 and 3. You are absolutely correct. I have actually modified the example some time ago, but still need to clean it up a bit before I push it! 🙂

You should also take a look at this app-note: http://www.freescale.com/files/sensors/doc/app_note/AN3461.pdf.
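As a hedged sketch of the roll and pitch equations discussed above, this is how they could be written for the rotation sequence used in AN3461 (roll from Y and Z, pitch restricted to ±90 degrees). The function names are hypothetical, and the + PI offset from the comment above is left out, so the angles are centred on zero:

```cpp
#include <cassert>
#include <cmath>

const double kRadToDeg = 180.0 / 3.14159265358979323846;

// Roll: atan2(accY, accZ), range -180..180 degrees.
double accelRollDeg(double accY, double accZ) {
  assert(accY != 0.0 || accZ != 0.0);  // a zero vector has no direction
  return std::atan2(accY, accZ) * kRadToDeg;
}

// Pitch: atan(-accX / sqrt(accY^2 + accZ^2)), range -90..90 degrees.
// atan2 is used so that a zero denominator does not cause a division by zero.
double accelPitchDeg(double accX, double accY, double accZ) {
  assert(accX != 0.0 || accY != 0.0 || accZ != 0.0);
  return std::atan2(-accX, std::sqrt(accY * accY + accZ * accZ)) * kRadToDeg;
}
```

Only the ratios between the axes matter, so the raw accelerometer values can be passed in without converting them to g first. The signs depend on how the sensor is mounted, so treat them as an assumption to verify on your own hardware.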

@joseph

Yes, they all support float math 🙂

@Zaki

It is caused by vibrations! Try to put some foam between the IMU and the frame – that is what I use myself.

Hello, this is very good info. I have built a Segway using code that was posted online. The problem is that it was using an analog IMU, which can no longer be found. So can you please figure out a way to adjust the code to work with a digital IMU? It would be a great help. I am willing to pay for it. The code I have used is this:

http://prototyperobotics.com/projects/the-seg-bot

@Miral

You should take a look at the newest code for the Balanduino here: https://github.com/TKJElectronics/Balanduino. It uses a digital MPU-6050 which is a combined 3-axis accelerometer and 3-axis gyroscope.

Hi Lauszus,

I have the same problem as Zaki. When the motors are stopped, everything works fine, but when the motors start, the vibrations make everything useless, with erratic readings. Which parameters in the Kalman filter can I change or adjust, and in what range, to try to improve the result?

Thanks for your time and for this wonderful code . 😉

I think I’m close to getting it to work.

Excuse my poor english.

@Ivan

You should make sure that you have no vibrations, as I wrote to Zaki. Also you probably want to set the range to +-2000 deg/s for the gyro and +-8g for the accelerometer.

Also you can experiment with the LPF (Low pass filter) setting on the MPU-6050 – look in the datasheet for register 0x1A.
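For the MPU-6050, the range and filter settings mentioned above come down to three register values. The sketch below only computes those values and the matching scale factors; the struct and function names are hypothetical, the register layout is taken from the MPU-6050 register map, and writing the bytes over I2C is left to whatever I2C routine you already use:

```cpp
#include <cassert>
#include <cstdint>

struct RegWrite {
  uint8_t reg;    // register address
  uint8_t value;  // byte to write
};

// GYRO_CONFIG (0x1B), FS_SEL in bits 4:3: 0..3 -> +-250, +-500, +-1000, +-2000 deg/s.
RegWrite gyroRange(uint8_t fsSel) {
  assert(fsSel <= 3);
  return {0x1B, static_cast<uint8_t>(fsSel << 3)};
}

// ACCEL_CONFIG (0x1C), AFS_SEL in bits 4:3: 0..3 -> +-2, +-4, +-8, +-16 g.
RegWrite accelRange(uint8_t afsSel) {
  assert(afsSel <= 3);
  return {0x1C, static_cast<uint8_t>(afsSel << 3)};
}

// CONFIG (0x1A), DLPF_CFG in bits 2:0: 0..6, a higher value means a lower bandwidth.
RegWrite lowPassFilter(uint8_t dlpfCfg) {
  assert(dlpfCfg <= 6);
  return {0x1A, dlpfCfg};
}

// Scale factors halve each time the range doubles. The gyro values are
// approximate; the datasheet lists 131, 65.5, 32.8 and 16.4 LSB per deg/s.
double gyroLsbPerDps(uint8_t fsSel) { assert(fsSel <= 3); return 131.0 / (1 << fsSel); }
double accelLsbPerG(uint8_t afsSel) { assert(afsSel <= 3); return 16384.0 / (1 << afsSel); }
```

For +-2000 deg/s and +-8g this gives 0x18 for register 0x1B and 0x10 for register 0x1C. After changing the range, the raw readings have to be divided by the matching scale factor instead of the default one.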

@Lauszus

Thanks for answering so fast.

I will try to isolate the board from the vibrations.

Where do I set the range for the gyro and acc?

My accelerometer is an ADXL335, and the gyros are analog ones from InvenSense…

Tks for your time.

@Ivan

Unfortunately you cannot adjust the range on analog sensors.

@Lauszus

tks again Lauszus,

Would it help to change the Q_angle, Q_gyro and R_angle variables? In what range can they be moved?

@Ivan

Yes it might help to change the values. No the range will not be moved – the range has nothing to do with the Kalman filter.

@Lauszus

I mean, between which values can I change those variables… Between 0 and 1?

Q_angle = 0.001; // Between 0.001 and 0.01?

Q_bias = 0.003; //Between 0.001 and 0.01?

R_measure = 0.03; // Between 0.01 and 0.1?

@Ivan

No, it is not as simple as that. Please read the post for an explanation on how to tune them.

ok. Tks for all.

I have read the post, but I do not understand it very well. If I get it to work, I will tell you the results.

@Lauszus Hello, first of all congratulations on your robot. I’m working on a project like yours, but I have a problem: how can I control the movement without interfering with the balancing control? How do you manage to do that? I have an LQR controller and the simulation looks good, but real life is more difficult XD

@Alejandro

Here is how I do in the Balanduino code: https://github.com/TKJElectronics/Balanduino/blob/master/Firmware/Balanduino/Motor.ino.

Let me know if you want me to explain some of it 😉

Hi again Lauszus, after doing some tests, I found that the vibrations are not the problem. If I turn on the motor of my airplane while it is static on the ground, yAngle and xAngle show the pitch and roll angles correctly. The problem starts when the airplane moves and centripetal and centrifugal forces come into play. Then the readings go crazy and yAngle and xAngle show false angles. I understood that combining accelerometers and gyroscopes with the Kalman filter would correct for these forces… is the code not ready for this? Thank you very much for your time and, one more time, excuse my poor English.

I use the LPR530AL_LY530ALH_ADXL335 code…

@Ivan

You should take a look at the code for the MPU-6050: https://github.com/TKJElectronics/Example-Sketch-for-IMU-including-Kalman-filter/tree/master/IMU6DOF/MPU6050. I haven’t had time to update the others yet, but it is pretty low priority on my ever growing list 😉

It is most likely happening because your accelerometer values get screwed up, since I tuned the Kalman filter for my balancing robot and not for something like an airplane, which is exposed to much larger g-forces than a balancing robot.

You should also try to implement a low pass filter on the values. I do this for the Balanduino using the built-in filter in the MPU-6050: https://github.com/TKJElectronics/Balanduino/blob/ffbabd8e597393d51f321b94e4fe55a5c2c67d32/Firmware/Balanduino/Balanduino.ino#L220-L223.

Your english is fine 😉
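With analog sensors there is no built-in filter to enable, so the low pass filter would have to be done in software. A minimal sketch of a first-order low-pass filter (an exponential moving average) is shown here; note that this is a different technique from the MPU-6050 hardware filter used in the Balanduino code, and the function name and alpha parameter are hypothetical:

```cpp
#include <cassert>

// First-order low-pass filter (exponential moving average).
// alpha in (0, 1]: smaller values smooth more but react more slowly.
double lowPass(double previous, double sample, double alpha) {
  assert(alpha > 0.0 && alpha <= 1.0);
  return previous + alpha * (sample - previous);
}
```

It would be called once per reading, for example filteredAccX = lowPass(filteredAccX, rawAccX, 0.1), before the accelerometer angle is calculated. A suitable alpha depends on the sample rate and on how noisy the readings are, so it has to be found by experiment.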

Thanks for the reply. I see that the code you pointed me to is totally different from what I used. I understand it better with analog sensors. 🙁

What changes did you make to adjust the Kalman filter to your robot? Could you explain a little?

Meanwhile I’ll read the links you pointed me to and also the Arduino forum post; I have got to page 12… 🙂

Thanks for your help.

@Ivan

Please read the section “The measurement …” one more time. This explains how to adjust the values 😉

I read there that the variance values need to be adjusted. Does that refer to the Q_angle, Q_bias and R_measure variables? But do these variables affect what you told me about, tuning the filter for the small g-forces your robot experiences versus the large ones my airplane experiences… or do they not? Thanks for your time.

and thanks for your patience …

@Ivan

Yes they do. For instance try to increase R_measure and it should trust new measurements less.
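To see why a larger R_measure makes the filter trust new measurements less, here is a simplified one-dimensional sketch of the update step. It only looks at the angle term of the gain, assuming the gain is the angle error variance divided by the sum of that variance and R_measure; the function names are hypothetical:

```cpp
#include <cassert>

// Gain applied to the angle innovation: K = P / (P + R).
// p00 is the angle error variance, rMeasure the measurement noise variance.
double angleGain(double p00, double rMeasure) {
  assert(p00 >= 0.0 && rMeasure > 0.0);
  return p00 / (p00 + rMeasure);
}

// Corrected angle after one update step: predicted + K * (measured - predicted).
double correctedAngle(double predicted, double measured, double p00, double rMeasure) {
  return predicted + angleGain(p00, rMeasure) * (measured - predicted);
}
```

With p00 = 0.03 and R_measure = 0.03 the gain is 0.5, so the estimate moves halfway towards the accelerometer angle. With R_measure = 0.3 the gain drops to about 0.09, so the same accelerometer reading pulls the estimate much less and the gyro prediction dominates.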

Thanks for the reply. I can use a potentiometer to vary the value of R_measure in real time… Can you give me an approximate range of values that R_measure should be within?

@Ivan

No, I cannot, sorry. You will have to experiment and see.

ok. tks.

@Lauszus

Thanks for the great tutorial. I am trying to implement a Kalman filter to determine the yaw rotation of a body. Will I have to use two separate filters? One for the pitch and roll with accelerometer and gyro, and one with a magnetometer and gyro for yaw?

Also, say I have another method for measuring the pitch and roll. Is it possible to incorporate this measurement into the equations in order to get better estimates?

@Jeremy

You will have to create an instance for each axis. For instance, if you want pitch, roll and yaw, then you would need three instances.

The three instances are totally independent, so if you only need it for yaw, simply just create one.

You will need to estimate pitch and roll in order to calculate yaw using the magnetometer. Please read: http://www.freescale.com/files/sensors/doc/app_note/AN4248.pdf. The equation you should use is Eqn. 22 at page 7.
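A hedged sketch of the tilt-compensated yaw calculation is given below, written from the equation as it appears in AN4248. It assumes that the hard-iron offsets have already been subtracted from the magnetometer readings and that the axis conventions match the app note; the function name is hypothetical, so please verify the signs against the app note and your own sensor orientation:

```cpp
#include <cassert>
#include <cmath>

// Tilt-compensated yaw in radians from magnetometer readings (bx, by, bz)
// and the roll and pitch angles in radians.
double yawRad(double bx, double by, double bz, double roll, double pitch) {
  assert(bx != 0.0 || by != 0.0 || bz != 0.0);  // a zero field has no heading
  double num = bz * std::sin(roll) - by * std::cos(roll);
  double den = bx * std::cos(pitch)
             + by * std::sin(pitch) * std::sin(roll)
             + bz * std::sin(pitch) * std::cos(roll);
  return std::atan2(num, den);
}
```

The resulting angle would then be used as the measurement for the yaw instance, in the same way the accelerometer angle is used for pitch and roll, with the gyro Z rate as the other input.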

@Lauszus Thank you. Do you have any pointers for my second question, please? Basically, I have another sensor which can detect the pitch and roll. How can I incorporate it into the filter?

Thank you in advance

hello,

please help me,

I’m doing a project on a line follower based on image processing that uses a Kalman filter as the algorithm.

I’m using an Arduino board and a CMUcam4.

Does any of you know how to code this kind of algorithm?

tq

@pearyunk

No unfortunately I don’t have any experience with that.

Thanks for the writeup…awesome! Two quick conceptual questions for learning purposes, tying into what you’ve shown above.

1) It seems you chose the output state z to be an angle (and not a rate) so that you could set z = AccelData in the innovation step, only because you had an accelerometer to fuse.

If this were a gyro-only filter, would you still put your gyro input to the u vector per above — or would you change z to be a rate output instead, and input your gyro data into z?

Actually why not have both accel angle and gyro rate in z and fuse both gyro and accel data in there at the same time, instead of gyro in u?

2) Along those lines, how did you personally decide what sensor data goes into u, and what into z? Why not flip and have accel be in u, and gyro be in z instead? Let’s say this is an IMU application. I thought u was the active control input, not the sensor reading, or does it not matter in this case?

Again, excellent and clear writeup, thanks!

hi Lauszus,

Is this code correct for changing the gyro and acc range to +-2000 deg/s and +-8g?

i2cData[0] = 7; // Set the sample rate to 1000Hz – 8kHz/(7+1) = 1000Hz