Vision-based emergency landing system for indoor drones (using FPGA)

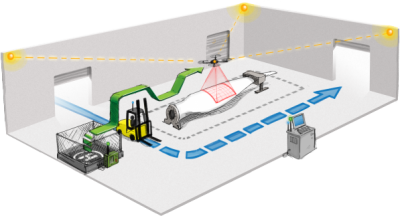

During the 4th semester of my bachelor's degree at Aalborg University, my project partner and I joined a new research project, UAWorld (DRONER RYKKER INDENDØRS MED DANSK TEKNOLOGI, "Drones move indoors with Danish technology"). The project aims to develop a new infrastructure and a set of drones that can be used in indoor industrial environments with dynamically changing obstacles (and layout) and with people likely to walk around. The drones are intended to carry goods from an assembly line hall into a warehouse, where the goods will be offloaded autonomously.

The main research group within the project had already made several decisions regarding the drone type, the indoor positioning system and the wireless communication to use. But because the drone depends on these systems (positioning and wireless link) to navigate reliably in a mission-critical environment, making sure that it never drops its goods or crashes into people, even in emergency situations, is just as important a task as making the quadcopter navigate safely.

For download links to the report and source code, please scroll to the bottom of the post. Further videos of the project under development can also be found at the bottom of the post.

Introduction

My project partner, Christian Quist Nielsen, and I were assigned to the research project to develop a local system on the drone that could stabilize it in an emergency with lost wireless connection and/or lost positioning data and, if necessary, perform a controlled landing while still holding position.

The theme of the 4th semester on Electronic Systems (EIT) at Aalborg University is Digital Design, hence we decided to take up the challenge and develop a vision-based emergency system for the drone which could be installed locally onto it. By using a video stream from a camera and markers in the indoor environment, an object tracking algorithm could detect any movement in the position of the drone and would take over the control of the drone to counteract these movements in case of an emergency.

After a short analysis of the problem and the emergency control strategy, we concluded that the locally installed camera had to point towards the ceiling of the building, as pointing it downwards would result in counter-movements that cause the tracked object to move outside the field of view (FOV) of the camera.

Since the 4th semester project had to include some kind of digital design, and to make sure that the emergency system would be reliable and always functioning, we decided not to use an existing embedded Linux device (such as a Raspberry Pi), which can run the OpenCV library but carries the risk of software bugs and crashes (not that our resulting design has been proven better). Instead we designed our own FPGA-based camera control system to be attached to an existing drone.

To be able to carry out any real-world prototype tests we decided to use a small 250mm sized quadcopter (frame by HobbyKing) equipped with a Pixhawk flight controller.

For the FPGA we chose a Spartan-6 in the form of the XULA2 board, as our initial tests of the image processing algorithms on a Spartan-3E showed that it became very hot and lacked speed.

To the FPGA we connected an OV5642 camera, a configurable 5 megapixel camera whose image output we scaled down to 640 x 480 due to timing constraints.

Project overview

The objective of the FPGA image processing was to identify and detect a green marker in the ceiling and to determine the center position of the marker in pixels. The input stream to the image processing would solely come from the scaled image feed from the camera mounted on the quadcopter pointing towards the ceiling.

First, we carried out a low-level analysis of common image processing algorithms and techniques to weigh their complexity against their benefits, keeping in mind that everything had to be implemented from scratch in VHDL.

It soon became clear that we needed color-based filtering and segmentation so that a bounding box could be used to determine the center position of the ceiling marker. This center position is passed on to a Picoblaze microprocessor inside the FPGA, programmed in assembly. The Picoblaze handles all communication with the Pixhawk by simply passing through or overriding the incoming DSM2 signal from the wireless receiver (used for demonstration purposes only). The result was a modular design consisting of three main blocks: Camera, Image Processing and MCU.

When a new frame is ready from the camera, it passes through the following image processing steps, in order:

- Camera stream decoding

- RGB to HSV conversion

- Color segmentation (filtering)

- Morphological transformations (erosion and dilation)

- Bounding box determination

- Center position estimation

The implementation and results of each individual step are briefly described below. For further details please refer to the project report and source code found at the bottom of the post.

Camera stream decoding

After the camera has been configured over its SCCB interface (I2C-compatible) to yield the required image size and refresh rate, pixel data is streamed out of the camera constantly. The configuration register settings can be found in the project material.

Pixel data arrives from the OV5642 camera as a stream, clocked by a pixel clock (PCLK) and synchronized by the HREF and VSYNC lines. The camera only has an 8-bit data bus, but because we needed the RGB565 color format to get 16-bit colors, a single pixel takes two pixel clocks. The first step in decoding the camera stream is therefore to merge the data from the two pixel clocks into one 16-bit pixel without losing track of the MSB and LSB order. This is done using a series of clocked latches.
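As an illustration of the byte merging described above, a minimal sketch could look like the following. It is not the project's actual module: the entity name, the port names, the MSB-first byte order and the use of a one-cycle valid strobe (instead of the divided STREAM_CLOCK used in the project) are all assumptions made for this example.

```vhdl
library ieee;
use ieee.std_logic_1164.all;

-- Hypothetical module: merges two consecutive 8-bit camera bytes into one
-- 16-bit RGB565 pixel. Names and byte order are illustrative assumptions.
entity rgb565_merge is
  port (
    PCLK        : in  std_logic;                      -- camera pixel clock
    HREF        : in  std_logic;                      -- '1' while a line is valid
    DATA_IN     : in  std_logic_vector(7 downto 0);   -- 8-bit camera bus
    PIXEL_OUT   : out std_logic_vector(15 downto 0);  -- merged RGB565 pixel
    PIXEL_VALID : out std_logic                       -- one-PCLK pulse per pixel
  );
end entity rgb565_merge;

architecture rtl of rgb565_merge is
  signal high_byte   : std_logic_vector(7 downto 0) := (others => '0');
  signal second_byte : std_logic := '0';  -- '1' when the next byte is the LSB
begin
  process (PCLK)
  begin
    if rising_edge(PCLK) then
      PIXEL_VALID <= '0';
      if HREF = '0' then
        -- Between lines: realign so the first byte of a line is the MSB
        second_byte <= '0';
      elsif second_byte = '0' then
        -- First byte of a pixel (assumed to be the most significant byte)
        high_byte   <= DATA_IN;
        second_byte <= '1';
      else
        -- Second byte: emit the complete 16-bit pixel
        PIXEL_OUT   <= high_byte & DATA_IN;
        PIXEL_VALID <= '1';
        second_byte <= '0';
      end if;
    end if;
  end process;
end architecture rtl;
```

Resetting the byte toggle whenever HREF is low is one simple way to keep the MSB/LSB order aligned at the start of every line.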

To test the developed image processing and to follow the progress of the project while still working in parallel with the development of the Pixhawk controller integration, we decided initially to use a Spartan-3 development board for the implementation and testing of the camera system and image processing. We equipped the development board with a VGA port which thereby allowed us to output the received video feed and each individual processed version.

From the arrival of the pixel stream to the morphologically filtered stream the pixel stream undergoes three conversions, taking the 16-bit stream down into a 1-bit stream. Notice that the STREAM_CLOCK here is a downsampled version of the PCLK as this combines the two 8-bit pixel data clocks from the camera into one.

RGB to HSV conversion

The first image processing step is to convert the RGB pixel stream into an HSV (hue, saturation and value) stream. Identifying colors in an RGB stream can be quite tedious, as the actual RGB values are strongly affected by the current lighting and contrast. It is much easier to detect colored objects after transforming the RGB pixels into HSV pixels, as the hue is a direct measure of the color of the pixel, given as an angle on a 360-degree color circle.

The math behind an HSV-conversion is given by the equations below.
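For reference, the standard textbook RGB-to-HSV conversion, with R, G and B normalized to the range 0 to 1, is usually written as follows. This is the generic formulation and is not copied from the project report.

$$
\begin{aligned}
M &= \max(R, G, B), \qquad m = \min(R, G, B), \qquad \Delta = M - m \\
H &= \begin{cases}
0^\circ & \text{if } \Delta = 0 \\
60^\circ \cdot \left( \dfrac{G - B}{\Delta} \bmod 6 \right) & \text{if } M = R \\
60^\circ \cdot \left( \dfrac{B - R}{\Delta} + 2 \right) & \text{if } M = G \\
60^\circ \cdot \left( \dfrac{R - G}{\Delta} + 4 \right) & \text{if } M = B
\end{cases} \\
S &= \begin{cases}
0 & \text{if } M = 0 \\
\dfrac{\Delta}{M} & \text{otherwise}
\end{cases} \\
V &= M
\end{aligned}
$$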

On the FPGA these conversion steps are implemented with the VHDL code shown below. Note that, to suit a binary implementation in VHDL, the color circle (hue) has been normalized to 384 steps instead of 360 degrees: each 60-degree sector spans 64 steps, so the scaling becomes a 6-bit shift and green and blue sit at the offsets 128 and 256.

process (RESET, STREAM_CLOCK)

begin

if RESET = '1' then

HUE_OUT <= ( others => '0');

SATURATION_OUT <= ( others => '0');

VALUE_OUT <= ( others => '0');

elsif rising_edge ( STREAM_CLOCK ) then

HUE_OUT <= std_logic_vector (Hue (8 downto 0));

SATURATION_OUT <= std_logic_vector ( Saturation );

VALUE_OUT <= std_logic_vector (MAX) & "00";

end if;

end process ;

RED <= unsigned ( RGB565_IN (15 downto 11) & "0");

GREEN <= unsigned ( RGB565_IN (10 downto 5));

BLUE <= unsigned ( RGB565_IN (4 downto 0) & "0");

MIN <= RED when (( RED < GREEN or RED = GREEN ) and

(RED < BLUE or RED = BLUE )) else

GREEN when (( GREEN < RED or GREEN = RED) and

( GREEN < BLUE or GREEN = BLUE )) else

BLUE ;

MAX <= RED when (( RED > GREEN or RED = GREEN ) and

(RED > BLUE or RED = BLUE )) else

GREEN when (( GREEN > RED or GREEN = RED) and

( GREEN > BLUE or GREEN = BLUE )) else

BLUE ;

MaxSelector <= "00" when (((RED+1) = GREEN ) and ((BLUE+1) = GREEN )) else

"01" when (( RED > GREEN or RED = GREEN ) and (RED > BLUE or RED = BLUE )) else

"10" when (( GREEN > RED or GREEN = RED) and ( GREEN > BLUE or GREEN = BLUE )) else

"11" when (( BLUE > RED or BLUE = RED) and ( BLUE > GREEN or BLUE = GREEN )) else

"00";

Saturation_Difference <= ( MAX - MIN ) when MAX > 0 else ( others => '0');

Saturation_Top <= Saturation_Difference & "00000000"; -- *256

Saturation_Bottom <= "00000000" & MAX ;

Saturation_Temp <= divide ( Saturation_Top , Saturation_Bottom ) when MAX > 0 else ( others => '0');

Saturation <= Saturation_Temp (7 downto 0) when

Saturation_Temp < 256 else ( others => '1');

HueTop_NOSIGN <= ( GREEN - BLUE ) when ( MaxSelector = "01" and ( GREEN > BLUE or GREEN = BLUE )) else

( BLUE - GREEN ) when ( MaxSelector = "01" and GREEN < BLUE ) else

( BLUE - RED ) when ( MaxSelector = "10" and ( BLUE > RED or BLUE = RED)) else

( RED - BLUE ) when ( MaxSelector = "10" and BLUE < RED) else

( RED - GREEN ) when ( MaxSelector = "11" and (RED > GREEN or RED = GREEN )) else

( GREEN - RED ) when ( MaxSelector = "11" and RED < GREEN ) else

( others => '0');

HueTop_SignBit <= '0' when ( MaxSelector = "01" and GREEN > BLUE ) else

'1' when ( MaxSelector = "01" and GREEN < BLUE ) else

'0' when ( MaxSelector = "10" and BLUE > RED) else

'1' when ( MaxSelector = "10" and BLUE < RED) else

'0' when ( MaxSelector = "11" and RED > GREEN ) else

'1' when ( MaxSelector = "11" and RED < GREEN ) else

'0';

Divisor <= "000000" & (MAX - MIN) when ( MaxSelector = "01") else

"000000" & (MAX - MIN ) when ( MaxSelector = "10") else

"000000" & (MAX - MIN ) when ( MaxSelector = "11") else

( others => '0');

HueTop_Multiplicated <= HueTop_NOSIGN & "000000";

Division_Result <= divide ( HueTop_Multiplicated , Divisor ) when ( Divisor > 0) else ( others => '0');

Hue <= ( others => '0') when ( MaxSelector = "00") else

-- Red is largest

Division_Result when ( MaxSelector = "01" and HueTop_SignBit = '0' and Division_Result < 384) else

(384 - Division_Result ) when ( MaxSelector = "01" and HueTop_SignBit = '1' and Division_Result < 384) else

( Division_Result - 384) when ( MaxSelector = "01" and HueTop_SignBit = '0' and ( Division_Result > 384 or Division_Result = 384) ) else

(768 - Division_Result ) when ( MaxSelector = "01" and HueTop_SignBit = '1' and ( Division_Result > 384 or Division_Result = 384) ) else

-- Green is largest

( Division_Result + 128) when ( MaxSelector = "10" and HueTop_SignBit = '0') else

(128 - Division_Result ) when ( MaxSelector = "10" and HueTop_SignBit = '1') else

-- Blue is largest

( Division_Result + 256) when ( MaxSelector = "11" and HueTop_SignBit = '0') else

(256 - Division_Result ) when ( MaxSelector = "11" and HueTop_SignBit = '1') else

( others => '0');
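As a quick sanity check of the code above, consider a fully saturated green RGB565 pixel (0x07E0). The worked numbers below assume that the `divide` helper, which is not shown in this post, performs truncating unsigned integer division.

$$
\begin{aligned}
R &= 0, \quad G = 63, \quad B = 0 \;\Rightarrow\; \mathrm{MAX} = 63, \quad \mathrm{MIN} = 0, \quad \mathrm{MaxSelector} = \texttt{"10"} \\
\mathrm{Hue} &= \left\lfloor \frac{64 \cdot (B - R)}{\mathrm{MAX} - \mathrm{MIN}} \right\rfloor + 128 = 0 + 128 = 128 \\
\mathrm{Saturation} &= \min\!\left( \left\lfloor \frac{256 \cdot (\mathrm{MAX} - \mathrm{MIN})}{\mathrm{MAX}} \right\rfloor,\; 255 \right) = \min(256,\, 255) = 255 \\
\mathrm{Value} &= 4 \cdot \mathrm{MAX} = 252
\end{aligned}
$$

A hue of 128 on the 384-step circle corresponds to 128 / 384 · 360° = 120°, which is pure green on the regular color circle.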

Color segmentation (filtering)

After the conversion into the HSV format the pixel data can be segmented according to some search constraints. Knowing that the marker in the ceiling is green and shines quite brightly, it is possible to program a binary segmentation that checks the current HSV pixel data against a set of minimum and maximum requirements for the pixel to be a potential marker pixel.

In the pictures below a segmentation of the red tomatoes has been implemented. Notice how the red parts of the image transform into a set of white pixels in the black/white image (1-bit segmented image).

The VHDL code to do this stream-type of pixel color segmentation is shown below.

process (RESET, STREAM_CLOCK)

begin

if RESET = '1' then

STREAM_HREF_OUT <= '0';

STREAM_VSYNC_OUT <= '0';

STREAM_PIXEL_OUT <= '0';

elsif rising_edge ( STREAM_CLOCK ) then -- latch the outputs

STREAM_HREF_OUT <= STREAM_HREF_IN ;

STREAM_VSYNC_OUT <= STREAM_VSYNC_IN ;

STREAM_PIXEL_OUT <= SegmentedBit ;

end if;

end process ;

SegmentedBit <= '1' when (

unsigned (HUE) > to_unsigned ( HUE_MIN , 9) and

unsigned (HUE) < to_unsigned ( HUE_MAX , 9) and

unsigned ( SATURATION ) > to_unsigned ( SAT_MIN , 8) and

unsigned ( VALUE) > to_unsigned ( VAL_MIN , 8)

) else '0';

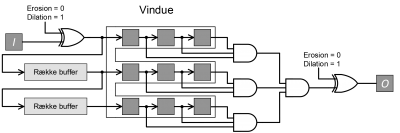

Inside the FPGA the combined HSV conversion and color segmentation thereby results in the logic diagram shown below, passing on a stream of segmented pixels to the morphological transformations.

Morphological transformations (erosion and dilation)

Unfortunately, a real image always contains noise, and in an environment with changing light conditions the segmented image will not necessarily contain a clean marker. Random noise pixels around the image disturb the later bounding box determination, so they have to be removed.

The most complicated part of the image processing is to get a clean identifiable binary object. To filter the segmented image a set of morphological transformations is used.

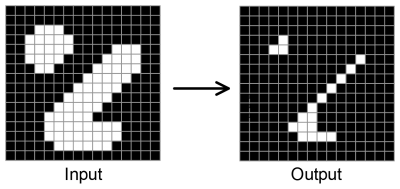

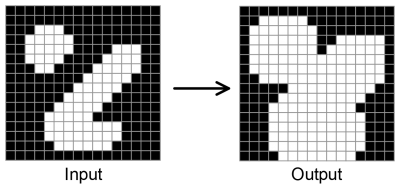

Mathematically speaking, morphological transformations are a set of binary AND and OR operators that use a kernel to define how to modify the pixels of an image. A kernel is a small binary sub-image, usually 3×3, 5×5 or similar, that defines how the transformation is applied to the input image. The basic morphological transformations are the erosion and dilation operations.

These operations either erode pixels away from the objects in an image or dilate the objects, thereby filling holes. Depending on the size and shape of the kernel, the erosion and dilation will be more or less aggressive. Eroding with a large kernel removes larger parts of the image (generating bigger holes), but it can also remove larger noise elements. Dilating with a large kernel fills holes with larger pixel areas, which also allows larger noise holes in an object to be filled and repaired. In the pictures below a fully filled (all ones) 3×3 kernel has been used to apply an erosion or dilation to the input image.

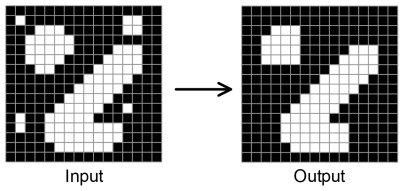

To yield even better results, the erosion and dilation transformations can be combined into an opening or closing transformation.

The closing transformation does as the name implies: it closes holes in an image without affecting the overall image and its edges too much.

The opening transformation, on the other hand, removes smaller particles in an image, again without affecting the edges too much.
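In the usual textbook notation, with A the binary image, B the kernel, ⊕ denoting dilation and ⊖ denoting erosion, the two combined transformations are defined as follows. The notation is the standard one and is not taken from the project report.

$$
\begin{aligned}
\text{closing:} \quad A \bullet B &= (A \oplus B) \ominus B \\
\text{opening:} \quad A \circ B &= (A \ominus B) \oplus B
\end{aligned}
$$

In other words, a closing is a dilation followed by an erosion with the same kernel, and an opening is an erosion followed by a dilation.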

Using a combined series of first closing and thereafter opening the image results in clean and usable results. The result of using multiple transformations over only one is shown below using the noisy image of the green marker.

The total image processing steps before the bounding box determination can thereby be summarized into the modular steps shown below.

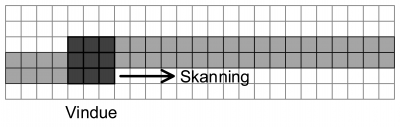

Implementing this series of morphological transformations inside the FPGA requires some rethinking of the mathematical operation as the pixel data is only being sent as a stream and would have to be filtered as a stream as shown in the image below.

Due to the stream implementation it is not possible to operate on a full image. Luckily, a morphological transformation only operates on the limited set of pixels defined by the kernel, so a so-called scanning-window implementation can be used.

By storing only a few rows of pixel data (marked as light grey), defined by the height of the kernel (marked as dark grey), the AND or OR operations of the specific morphological transformation can be performed on the actual pixels.

Using a FIFO approach allows the pixel data stream to constantly supply new pixels to the transformation and for each new pixel the transformation will also constantly supply filtered pixel data from the center of the scanning window. This is implemented on the FPGA using the code shown below:

signal LineBuffer1 : std_logic_vector (639 downto 0);

signal LineBuffer2 : std_logic_vector (639 downto 0);

signal WindowRow1 : std_logic_vector (2 downto 0);

signal WindowRow2 : std_logic_vector (2 downto 0);

signal WindowRow3 : std_logic_vector (2 downto 0);

------------------------------------------------

if rising_edge ( STREAM_CLOCK ) then

if STREAM_HREF_IN = '1' and STREAM_VSYNC_IN = '1' then

-- Shift the window's shift registers

WindowRow1 <= WindowRow1 (1 downto 0) & LineBuffer1 (639) ;

WindowRow2 <= WindowRow2 (1 downto 0) & LineBuffer2 (639) ;

WindowRow3 <= WindowRow3 (1 downto 0) & STREAM_PIXEL_IN ;

-- Shift into the two row buffers

LineBuffer1 <= LineBuffer1 (638 downto 0) & LineBuffer2 (639) ;

LineBuffer2 <= LineBuffer2 (638 downto 0) & STREAM_PIXEL_IN ;

end if;

end if;

The erosion and dilation transformations could be implemented on the FPGA as one module for easy reuse (instantiation). As the two transformations are duals of each other (eroding an image is the same as dilating its inverse and inverting the result), the difference between them comes down to an XOR on the input and output.

To keep things readable, however, the VHDL code below shows the implementation of the two morphological transformations separately.

For the erosion this is implemented using the code shown below:

-- First row of the window

(( WindowRow1 (2) and ELEMENT (0) (2) ) or (NOT ELEMENT (0) (2)))

and

(( WindowRow1 (1) and ELEMENT (0) (1) ) or (NOT ELEMENT (0) (1)))

and

(( WindowRow1 (0) and ELEMENT (0) (0) ) or (NOT ELEMENT (0) (0)))

and

-- Second row of the window

(( WindowRow2 (2) and ELEMENT (1) (2) ) or (NOT ELEMENT (1) (2)))

and

(( WindowRow2 (1) and ELEMENT (1) (1) ) or (NOT ELEMENT (1) (1)))

and

(( WindowRow2 (0) and ELEMENT (1) (0) ) or (NOT ELEMENT (1) (0)))

and

-- Third row of the window

(( WindowRow3 (2) and ELEMENT (2) (2) ) or (NOT ELEMENT (2) (2)))

and

(( WindowRow3 (1) and ELEMENT (2) (1) ) or (NOT ELEMENT (2) (1)))

and

(( WindowRow3 (0) and ELEMENT (2) (0) ) or (NOT ELEMENT (2) (0)))

);

And for the dilation:

-- First row of the window

( WindowRow1 (2) and STRUCTURAL_ELEMENT (0) (2)) or

( WindowRow1 (1) and STRUCTURAL_ELEMENT (0) (1)) or

( WindowRow1 (0) and STRUCTURAL_ELEMENT (0) (0)) or

-- Second row of the window

( WindowRow2 (2) and STRUCTURAL_ELEMENT (1) (2)) or

( WindowRow2 (1) and STRUCTURAL_ELEMENT (1) (1)) or

( WindowRow2 (0) and STRUCTURAL_ELEMENT (1) (0)) or

-- Third row of the window

( WindowRow3 (2) and STRUCTURAL_ELEMENT (2) (2)) or

( WindowRow3 (1) and STRUCTURAL_ELEMENT (2) (1)) or

( WindowRow3 (0) and STRUCTURAL_ELEMENT (2) (0))

);
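The XOR relationship mentioned earlier can be sketched as a single combinational module that performs either transformation depending on a mode input. This is only an illustration under assumed names: the entity `morph3x3`, the `ERODE` mode bit and the flattened 9-bit `WINDOW` and `KERNEL` vectors are hypothetical and do not come from the project source.

```vhdl
library ieee;
use ieee.std_logic_1164.all;

-- Hypothetical combined 3x3 erosion/dilation stage (combinational part only).
-- Names and the flattened 9-bit window/kernel layout are illustrative.
entity morph3x3 is
  port (
    ERODE     : in  std_logic;                     -- '1' = erosion, '0' = dilation
    WINDOW    : in  std_logic_vector(8 downto 0);  -- three window rows concatenated
    KERNEL    : in  std_logic_vector(8 downto 0);  -- kernel bits in the same order
    PIXEL_OUT : out std_logic
  );
end entity morph3x3;

architecture rtl of morph3x3 is
begin
  process (ERODE, WINDOW, KERNEL)
    variable hit : std_logic;
  begin
    hit := '0';
    for i in WINDOW'range loop
      -- Dilation term: window pixel AND kernel bit.
      -- For erosion the window pixel is inverted first (XOR with ERODE).
      hit := hit or ((WINDOW(i) xor ERODE) and KERNEL(i));
    end loop;
    -- Inverting the output as well turns the OR over inverted pixels into
    -- the AND over (pixel OR NOT kernel bit) used by the erosion.
    PIXEL_OUT <= hit xor ERODE;
  end process;
end architecture rtl;
```

With `ERODE = '0'` the expression reduces to the OR of AND terms of the dilation listing, and with `ERODE = '1'` De Morgan's law gives the AND of `(pixel and kernel) or (not kernel)` terms of the erosion listing. The window input could, for example, be formed as `WindowRow1 & WindowRow2 & WindowRow3`.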

Bounding box and center position determination

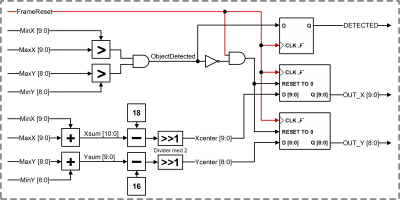

With the cleaned image containing one solid marker, the position of the marker has to be determined. This is done by calculating a bounding box around the marker. A bounding box is two sets of coordinates that identify the upper left corner and lower right corner of the detected marker.

As the pixel data comes in a stream it is not possible to just look at the complete segmented and filtered image to determine a bounding box around the dilated marker. The bounding box algorithm has to be implemented in a stream-based fashion. This is done by constantly keeping track of the current position of the stream pixel and resetting this at every new frame.

Whenever an identified marker pixel arrives two sets of bounding box position counters are updated to reflect the outermost positions of the marker pixels. This is illustrated in the figures below.

The implementation is separated into three logic parts: the position generator, the bounding box generator and a reset generator. The position generator counts every arriving pixel and rolls over at every new line to keep track of both the x- and y-coordinate of the current stream pixel. The bounding box generator updates the two sets of bounding box coordinates whenever a marker pixel arrives. The reset generator monitors the VSYNC line to reset all counters whenever a new frame starts. The bounding box implementation is shown in the logic diagram below.

Finally the bounding box positions are used to calculate the center position of the detected marker whenever a full frame has been received. The implementation is shown in the logic diagram below.

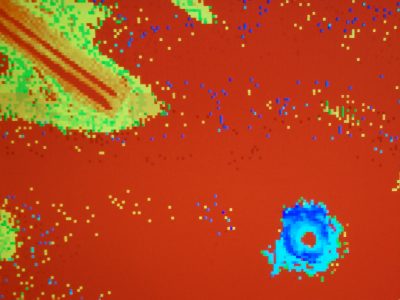
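To make the three logic parts and the center calculation more concrete, here is a minimal sketch of how they could be written for a 640 x 480 1-bit stream. It is not the project's implementation: the entity name, the port names, the 10-bit coordinate width and the assumption that VSYNC is high during the active frame are all choices made for this example.

```vhdl
library ieee;
use ieee.std_logic_1164.all;
use ieee.numeric_std.all;

-- Hypothetical stream-based bounding box and center calculation.
-- Port names and sync polarities are assumptions for illustration.
entity bounding_box is
  port (
    STREAM_CLOCK : in  std_logic;
    HREF         : in  std_logic;  -- '1' while pixels of a line are valid
    VSYNC        : in  std_logic;  -- '1' while a frame is active (assumed)
    PIXEL_IN     : in  std_logic;  -- '1' = marker pixel
    CENTER_X     : out unsigned(9 downto 0) := (others => '0');
    CENTER_Y     : out unsigned(9 downto 0) := (others => '0');
    FOUND        : out std_logic := '0'  -- '1' if the last frame held a marker
  );
end entity bounding_box;

architecture rtl of bounding_box is
  signal x, y         : natural range 0 to 1023 := 0;
  signal x_min, y_min : natural range 0 to 1023 := 1023;
  signal x_max, y_max : natural range 0 to 1023 := 0;
  signal seen         : std_logic := '0';
  signal href_d       : std_logic := '0';
  signal vsync_d      : std_logic := '0';
begin
  process (STREAM_CLOCK)
  begin
    if rising_edge(STREAM_CLOCK) then
      href_d  <= HREF;
      vsync_d <= VSYNC;

      if VSYNC = '0' then
        -- Reset generator: when the frame has just ended, publish the
        -- center once, then clear all counters for the next frame.
        if vsync_d = '1' then
          FOUND <= seen;
          if seen = '1' then
            CENTER_X <= to_unsigned((x_min + x_max) / 2, 10);
            CENTER_Y <= to_unsigned((y_min + y_max) / 2, 10);
          end if;
        end if;
        x <= 0;        y <= 0;
        x_min <= 1023; y_min <= 1023;
        x_max <= 0;    y_max <= 0;
        seen  <= '0';
      elsif HREF = '1' then
        -- Bounding box generator: widen the box for every marker pixel.
        if PIXEL_IN = '1' then
          seen <= '1';
          if x < x_min then x_min <= x; end if;
          if x > x_max then x_max <= x; end if;
          if y < y_min then y_min <= y; end if;
          if y > y_max then y_max <= y; end if;
        end if;
        -- Position generator: advance along the current line.
        x <= x + 1;
      elsif href_d = '1' then
        -- End of line: roll over to the start of the next row.
        x <= 0;
        y <= y + 1;
      end if;
    end if;
  end process;
end architecture rtl;
```

The center is simply the midpoint of the box, and since the division is by two it reduces to a one-bit shift in hardware.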

Image processing results

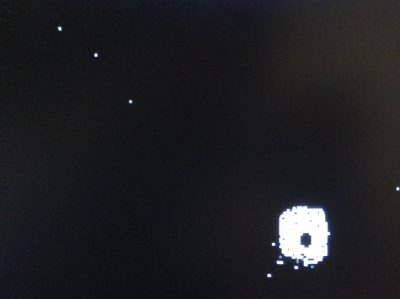

All implementations have been simulated using the built-in Xilinx ISim tool to confirm that the logic behaves as desired. Furthermore, a VGA screen has been used to debug the actual implementation on the FPGA; the pictures below show the output of the individual image processing steps for the following input image. Note that in this case the marker is blue.

The first step is the HSV conversion whose output is shown below.

Notice all the blue pixels around the image due to noise. When segmenting this image the marker is not clearly identifiable due to noise pixels.

Therefore the morphological opening transformation is first applied yielding the output as shown below.

This is followed by a morphological closing transformation, yielding the output below.

Finally this marker can be identified and positioned by the bounding box implementation resulting in a center position of the marker as shown below.

The steps can also be seen in the video below where the steps are also explained in Danish.

Other videos (in Danish)

Below a video gives a general overview and description of the hardware platform and shows how the implemented system affects the Pixhawk control inputs when the emergency system is engaged.

The system can also be used to let the quadcopter move from marker to marker by using LED controlled lighting markers. In the video below the position of the quadcopter is controlled by changing the position of the marker.

A shorter video just showing the position hold capability of the system is seen below.

The first video made after the project started covers the initial research into the necessary image processing algorithms. This video can be seen below.

After the first success with the camera stream decoding and color segmentation, we made a video to show it. Although its content is similar to the video above explaining the image processing steps in Danish, it can be seen below.

Project downloads

The full project report, unfortunately only available in Danish, can be downloaded here: Nødsystem til orientering af indendørs droner (Emergency system for orientation of indoor drones)

All project material including both VHDL source code, Picoblaze assembly, schematics and PCB design, can be downloaded here: Full project materials download

If you are only interested in the source code (VHDL + assembly), including test bench code and the Spartan-3 image processing code, an organized source code zip file can be downloaded here: Organized source code download

The included VHDL code is divided into separate instantiable modules given by their individual .VHD files.
